SEO Automation: How To Automate SEO Tasks With AI Workflows

Most SEO work is repetitive. Keyword research, content briefs, publishing schedules, technical audits, rank tracking: these tasks eat hours every week, and they look roughly the same each time you do them. That's exactly why learning how to automate SEO is one of the highest-leverage moves you can make for your organic growth strategy. Instead of grinding through spreadsheets and toggling between five different tools, you can hand off predictable workflows to AI and automation software that handles them faster and more consistently than you can by hand.

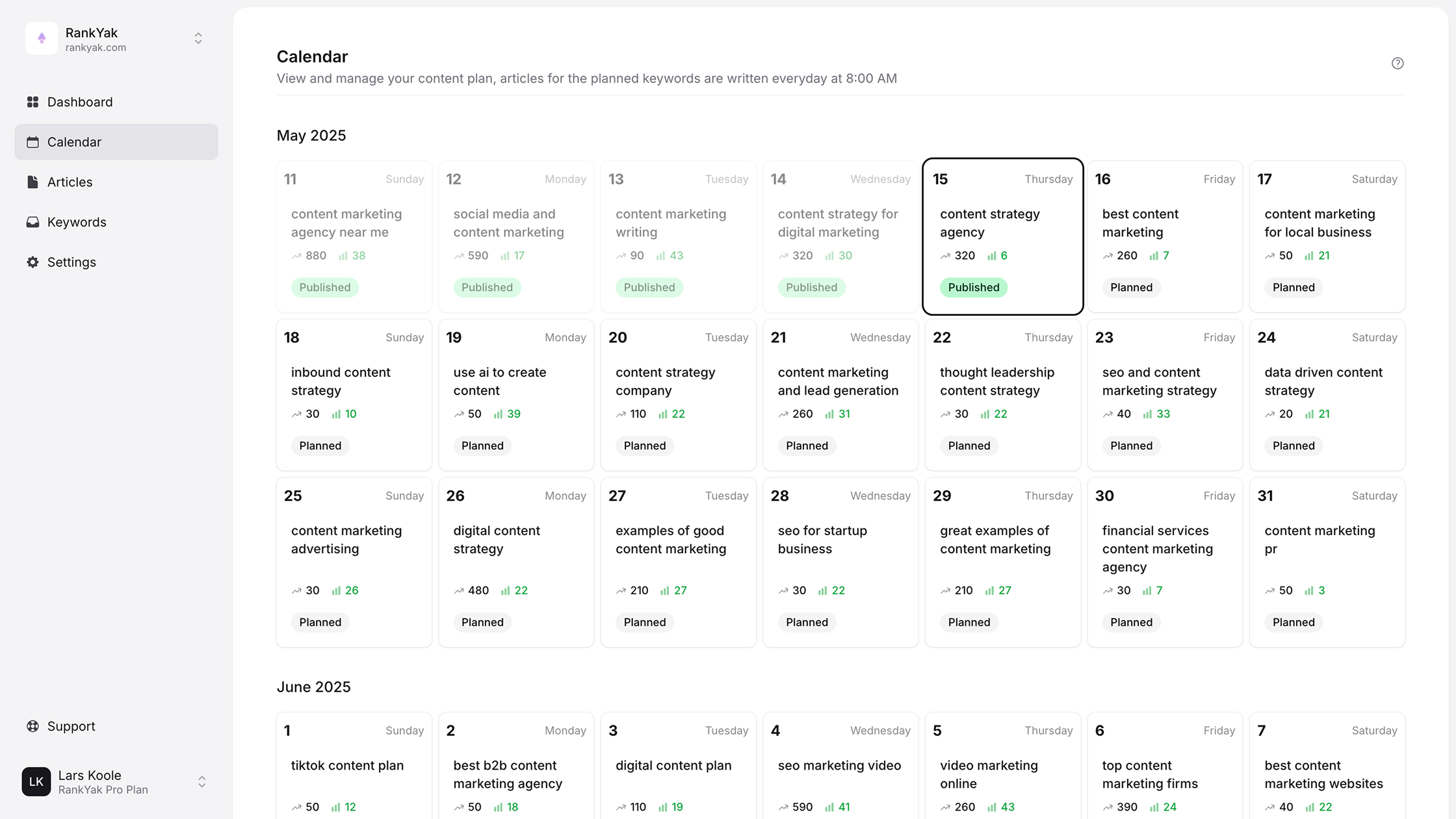

The good news: SEO automation has matured significantly. You're no longer limited to scheduling a few reports. In 2026, you can automate everything from keyword discovery to content creation to publishing, and the output quality is genuinely good enough to rank. That's the exact problem we built RankYak to solve: a single platform that runs your entire SEO content pipeline on autopilot, from finding the right keywords to publishing optimized articles on your site daily.

This guide breaks down the specific SEO tasks you can automate, the tools and AI workflows that make it possible, and a practical framework for building your own automation stack. Whether you want to eliminate busywork from your current process or build a hands-off content engine from scratch, you'll walk away with a clear plan to put your SEO on autopilot without sacrificing quality.

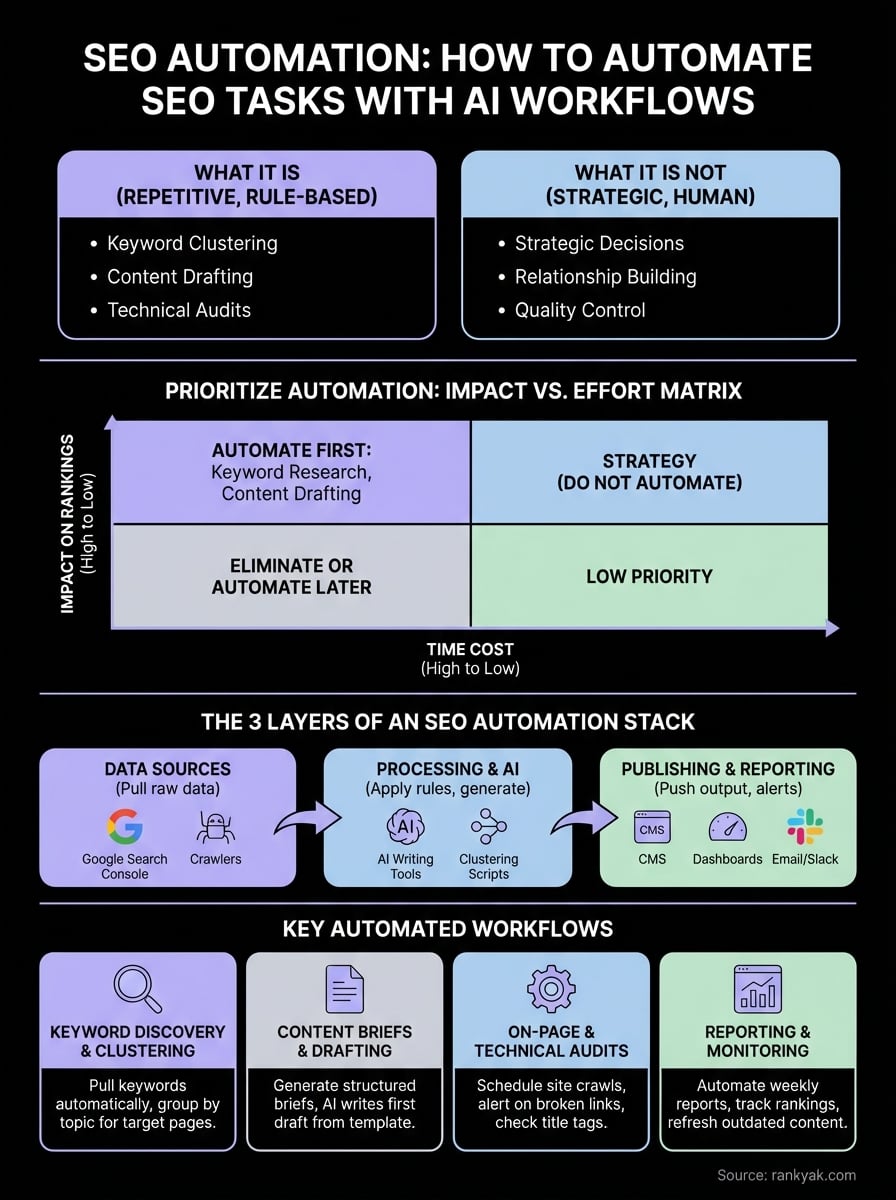

What SEO automation is and what it is not

SEO automation means using software, AI, or scripted workflows to handle repetitive, rule-based SEO tasks that would otherwise require hours of manual effort. Think keyword clustering, content drafting, rank tracking, internal link audits, and publishing schedules. When you learn how to automate SEO, you're not cutting corners; you're removing the mechanical parts of the process so you can focus on strategy and decisions that actually require human judgment.

What automation handles well

Automation excels at tasks that follow a predictable pattern. If a task involves pulling data, organizing it by a set of rules, and outputting a result, a machine can do it faster and more consistently than you can. Keyword research is a clear example: tools can crawl search data, cluster related terms by topic, and score them by difficulty and volume in seconds. That same logic applies to technical audits, where a crawler checks hundreds of pages for broken links, missing metadata, slow load times, and redirect chains without any human in the loop.

Content creation has also entered this category in a meaningful way. Modern AI can take a keyword, research the top-ranking pages, identify content gaps, and produce a fully structured, SEO-optimized draft that needs only light edits before you publish it. Reporting is another strong use case: automated dashboards pull live data from Google Search Console and analytics platforms so you always know where traffic is growing and where it is dropping, without manually exporting spreadsheets every week.

The more predictable and rule-based a task is, the stronger a candidate it is for automation.

What automation cannot replace

Automation is not a replacement for strategic thinking or brand judgment. A tool can tell you that a keyword has 8,000 monthly searches and low competition. It cannot tell you whether that keyword fits your brand positioning, whether your audience actually cares about the topic, or whether writing about it would undermine your authority in your niche. Those calls still belong to you.

Similarly, relationship-based work like building genuine editorial backlinks, pitching journalists, or creating content that sparks real conversations in your industry requires a human touch. You can automate the research and outreach templates, but the relationships themselves are not something you can script. The same goes for ranking drops and crisis response: if your traffic falls sharply, you need to understand why and make judgment calls about what to prioritize, not hand that decision to an algorithm.

One more boundary worth naming: automation does not guarantee quality by default. An AI that produces 30 articles a week without any oversight or quality control will not help your rankings. Google's helpful content guidelines reward content that demonstrates real expertise, genuine insight, and clear value for readers. That means your automation stack needs human checkpoints built in, even if those checkpoints are brief review passes. The goal is not to remove humans from the process entirely; it is to eliminate the time-wasting mechanical tasks so you can spend your attention where it actually moves the needle.

Choose workflows to automate first

Not every SEO task deserves automation on day one. If you try to automate everything at once, you'll spend more time configuring tools than doing actual SEO. The smarter move is to rank your current tasks by volume and repetition, then start with the ones that consume the most hours and require the least strategic judgment. That's where automation pays off fastest, and where it is most likely to stick long-term.

Start with high-volume, low-judgment tasks

The best candidates for automation are tasks you repeat every week, every month, or for every new piece of content you produce. Keyword research and clustering typically top this list because you run the same process for dozens or hundreds of terms at a time. Rank tracking is another clear winner: pulling rankings manually for even 50 keywords across multiple pages is tedious and easy to get wrong. Content brief generation follows the same logic. Once you have a keyword, building a brief means pulling competitor data, identifying heading structures, and listing related terms: a repeatable, scriptable workflow that doesn't require original thinking from you.

If you can describe a task as a series of steps that look identical each time you do them, it is ready to automate.

Use an impact-versus-effort matrix to prioritize

Before you build anything, map your current SEO tasks against two axes: impact on rankings and time cost per execution. Tasks that score high on both are your immediate targets. The table below gives you a concrete starting framework:

| Task | Impact | Time Cost | Automate First? |
|---|---|---|---|
| Keyword research and clustering | High | High | Yes |
| Content drafting and publishing | High | High | Yes |
| Rank tracking and reporting | Medium | High | Yes |
| Technical site crawls | High | Medium | Yes |
| Internal link audits | Medium | Medium | Yes |
| Outreach email templates | Medium | Low | Later |
| Strategy and topic decisions | High | Low | No |

Start with the top four rows and build from there. Trying to automate outreach templates before you've handled keyword research and content drafting gets the order backwards. Get the high-volume, high-impact workflows running smoothly first, then expand your stack once those pipelines deliver consistent, reliable output without you needing to babysit them.
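If you want the matrix to be repeatable rather than a one-off exercise, a few lines of Python can score each task. This is a minimal sketch, not a standard formula: the numeric levels (Low=1, Medium=2, High=3), the `needs_judgment` flag, and the rule that anything with a Low time cost waits are assumptions chosen so the output reproduces the table above.

```python
# Hypothetical scoring scheme: map the matrix labels to numbers
LEVEL = {"Low": 1, "Medium": 2, "High": 3}

# (task, impact, time cost, needs human judgment?)
TASKS = [
    ("Keyword research and clustering", "High", "High", False),
    ("Content drafting and publishing", "High", "High", False),
    ("Rank tracking and reporting", "Medium", "High", False),
    ("Technical site crawls", "High", "Medium", False),
    ("Internal link audits", "Medium", "Medium", False),
    ("Outreach email templates", "Medium", "Low", False),
    ("Strategy and topic decisions", "High", "Low", True),
]


def prioritize(tasks):
    """Return (score, task, decision) tuples, highest score first."""
    ranked = []
    for name, impact, cost, needs_judgment in tasks:
        if needs_judgment:
            decision = "No"  # keep judgment-heavy work with a human
        elif LEVEL[cost] >= LEVEL["Medium"]:
            decision = "Yes"  # repeated often enough to pay back the setup time
        else:
            decision = "Later"
        score = LEVEL[impact] * LEVEL[cost]
        ranked.append((score, name, decision))
    # sort() is stable, so tasks with equal scores keep their original order
    ranked.sort(key=lambda row: row[0], reverse=True)
    return ranked


for score, name, decision in prioritize(TASKS):
    print(f"{score:>2}  {decision:<5}  {name}")
```

Multiplying impact by time cost rewards tasks that are both valuable and expensive to do by hand, which is the same intuition as the matrix; adjust the weights if your own priorities differ.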

Set up your automation stack

Before you automate a single task, you need to know what your stack actually looks like. An SEO automation stack is simply the combination of tools, APIs, and workflow connectors you use to move data and trigger actions without manual input. Most people already have parts of this in place, like a CMS, a rank tracker, or Google Search Console. The goal at this stage is to connect those pieces into a coherent system so data flows between them automatically, and tasks trigger each other in sequence rather than sitting in disconnected silos.

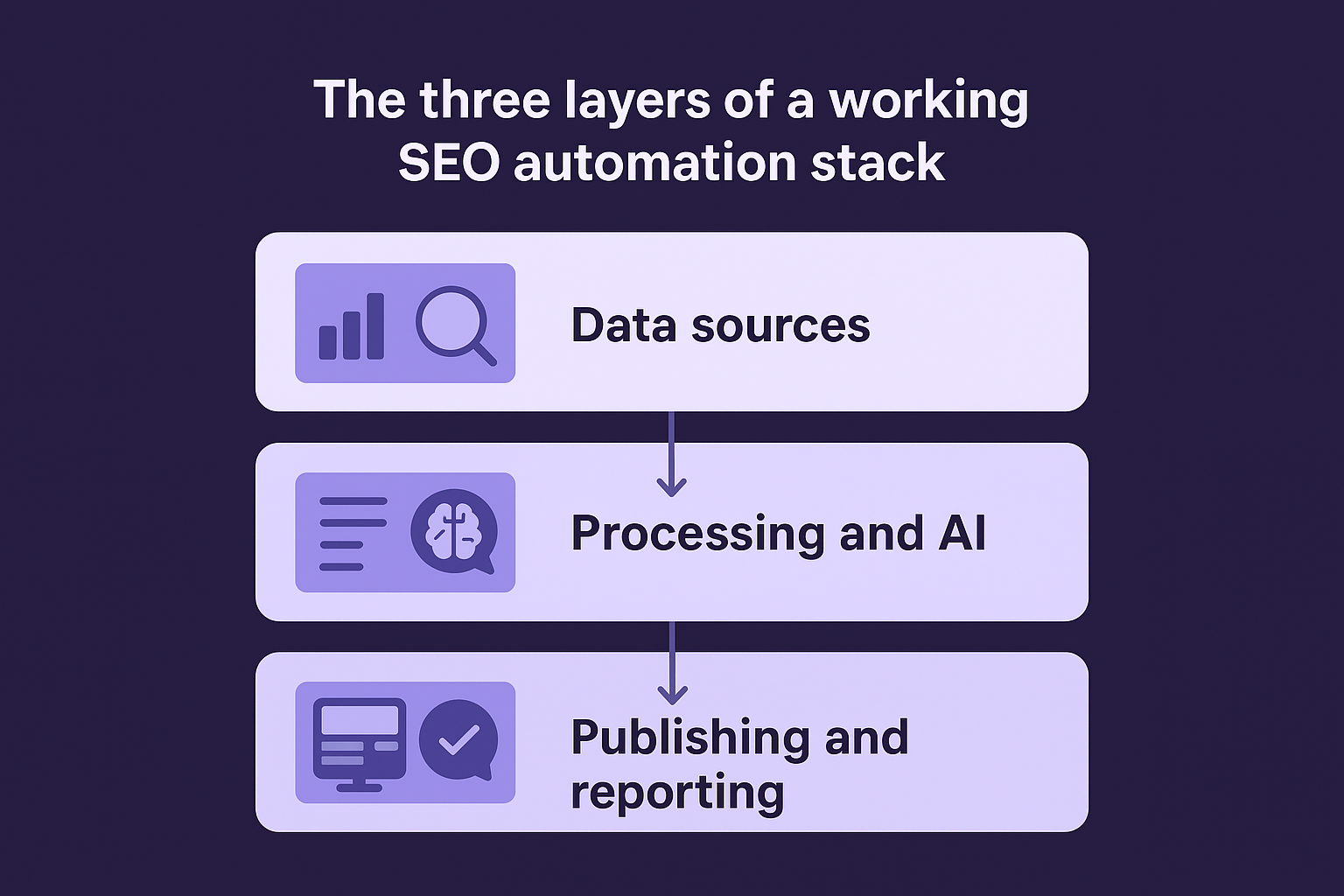

The three layers of a working SEO automation stack

Every functional SEO automation setup breaks down into three layers: data sources, processing and AI, and publishing and reporting. Your data sources pull raw signals like keyword volumes, rankings, and crawl results. Your processing layer applies rules and AI to turn that raw data into briefs, drafts, and decisions. Your publishing layer pushes the output to your CMS, reporting dashboards, or notification systems. When you understand how to automate SEO as a layered system rather than a pile of subscriptions, troubleshooting becomes much easier because you can pinpoint exactly which layer broke when something stops working.

Here is a simple breakdown of the three layers:

| Layer | What it does | Examples |
|---|---|---|
| Data sources | Pulls keywords, rankings, crawl data | Google Search Console, crawl tools |
| Processing and AI | Generates briefs, drafts, clusters | AI writing platforms, clustering scripts |
| Publishing and reporting | Pushes content, sends alerts | WordPress REST API, Slack webhooks, dashboards |

Build your stack layer by layer, not tool by tool, or you will end up with a collection of disconnected subscriptions that never talk to each other.
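To make the layering concrete, here is a minimal sketch of a pipeline runner that calls one function per layer and names the layer that failed. The three step functions (`fetch_keywords`, `build_briefs`, `publish_drafts`) are hypothetical stand-ins; in a real stack they would call Search Console, your AI tool, and your CMS.

```python
def run_pipeline(fetch, process, publish):
    """Run the three layers in order, naming the layer that breaks."""
    layers = [
        ("data sources", fetch),
        ("processing and AI", process),
        ("publishing and reporting", publish),
    ]
    payload = None
    for layer_name, step in layers:
        try:
            payload = step(payload)  # each layer consumes the previous layer's output
        except Exception as exc:
            raise RuntimeError(f"Pipeline failed in the {layer_name} layer") from exc
    return payload


# Hypothetical stand-ins for real integrations
def fetch_keywords(_):
    return ["how to automate seo", "seo automation tools"]


def build_briefs(keywords):
    return [{"keyword": kw, "intent": "informational"} for kw in keywords]


def publish_drafts(briefs):
    return f"queued {len(briefs)} drafts"


print(run_pipeline(fetch_keywords, build_briefs, publish_drafts))
```

Because the original exception is chained with `from exc`, the traceback still shows the underlying error while the message tells you which layer to inspect first.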

Connect your tools before you configure anything

The most common mistake when building an automation stack is configuring individual tools in isolation before you have mapped how they connect. Start by writing out your current workflow in plain steps: keyword identified, brief written, draft created, published, tracked. Then identify which tool handles each step and whether those tools offer native integrations or API access with each other.

For example, if your CMS is WordPress, confirm your content tool can push directly to it via the REST API before you spend time building AI prompts or keyword rules. Connecting the plumbing first saves you from rebuilding your entire setup later when you discover two critical tools have no direct connection.
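Mapping the plumbing can itself be scripted. The sketch below writes the workflow as ordered steps, lists the tool-to-tool handoffs you have already confirmed (native integration or API access), and prints every handoff that still lacks one. The tool names and the `CONFIRMED` set are illustrative assumptions; fill them in from your own stack.

```python
# Ordered workflow steps and the tool that handles each one (illustrative)
WORKFLOW = [
    ("keyword identified", "Search Console API"),
    ("brief written", "brief script"),
    ("draft created", "AI writing API"),
    ("published", "WordPress REST API"),
    ("tracked", "rank tracker"),
]

# Handoffs you have verified actually work, as (from_tool, to_tool) pairs
CONFIRMED = {
    ("Search Console API", "brief script"),
    ("brief script", "AI writing API"),
    ("AI writing API", "WordPress REST API"),
}


def missing_connections(workflow, confirmed):
    """Return each consecutive handoff that has no confirmed connection."""
    gaps = []
    for (step, tool), (next_step, next_tool) in zip(workflow, workflow[1:]):
        if tool != next_tool and (tool, next_tool) not in confirmed:
            gaps.append(f"{step} -> {next_step}: {tool} to {next_tool}")
    return gaps


for gap in missing_connections(WORKFLOW, CONFIRMED):
    print("No connection:", gap)
```

With the sample data, the only gap reported is the handoff from WordPress to the rank tracker, which is exactly the kind of missing link you want to find before writing any prompts.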

Automate keyword discovery and clustering

Keyword research is one of the most time-consuming parts of SEO, and it is also one of the easiest to automate because the process follows the same steps every single time. When you understand how to automate SEO at the keyword level, you stop spending hours manually digging through search data and instead let tools surface the best opportunities on a schedule. The result is a continuously refreshed keyword list that feeds directly into your content pipeline without requiring you to touch it every week.

Pull keywords from Google Search Console automatically

Your first automated data source should be Google Search Console, which already tracks every query that surfaces your site in search results. Rather than logging in manually to export reports, you can connect the Search Console API to a simple script or workflow tool that pulls your top queries, impressions, and click-through rates on a recurring schedule and dumps them into a spreadsheet or database. This gives you a live feed of keywords you already rank for but may not be maximizing.

Here is a basic Python snippet to pull query data from the Search Console API on a schedule:

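```python
from datetime import date, timedelta

from google.oauth2 import service_account
from googleapiclient.discovery import build

# Assumes a service account key file whose account has been added as a user
# on your Search Console property (the file name here is a placeholder)
creds = service_account.Credentials.from_service_account_file(
    'service-account.json',
    scopes=['https://www.googleapis.com/auth/webmasters.readonly'],
)
service = build('searchconsole', 'v1', credentials=creds)

# Rolling 28-day window so each scheduled run pulls fresh data;
# Search Console data usually lags by a few days
end_date = date.today() - timedelta(days=3)
start_date = end_date - timedelta(days=28)

response = service.searchanalytics().query(
    siteUrl='https://yoursite.com/',
    body={
        'startDate': start_date.isoformat(),
        'endDate': end_date.isoformat(),
        'dimensions': ['query'],
        'rowLimit': 500,
    },
).execute()

for row in response.get('rows', []):
    print(row['keys'][0], row['clicks'], row['impressions'], row['ctr'])
```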

Run this script weekly using a cron job or a workflow automation tool and feed the output directly into your keyword tracking sheet without any manual exports.
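To turn that raw feed into a shortlist of keywords you rank for but may not be maximizing, you can filter the rows the API returns (each row carries `keys`, `clicks`, `impressions`, `ctr`, and `position`). This is a sketch: the thresholds (positions 5 to 20, at least 100 impressions, CTR at or under 3 percent) are assumptions to tune for your site, not Google guidance, and the sample rows are made up.

```python
def find_opportunities(rows, min_impressions=100, max_ctr=0.03, positions=(5, 20)):
    """Filter Search Console rows to queries that rank but underperform.

    Each row is shaped like the API response: {'keys': [query], 'clicks',
    'impressions', 'ctr', 'position'}. All thresholds are assumptions.
    """
    low, high = positions
    picks = [
        row for row in rows
        if row["impressions"] >= min_impressions
        and row["ctr"] <= max_ctr
        and low <= row["position"] <= high
    ]
    # Most impressions first: these queries have the most clicks to gain
    picks.sort(key=lambda row: row["impressions"], reverse=True)
    return [(row["keys"][0], row["impressions"], round(row["position"], 1)) for row in picks]


# Hypothetical sample rows in the API's response shape
sample_rows = [
    {"keys": ["seo automation"], "clicks": 4, "impressions": 900, "ctr": 0.0044, "position": 11.3},
    {"keys": ["automate seo"], "clicks": 60, "impressions": 800, "ctr": 0.075, "position": 2.1},
    {"keys": ["ai seo workflow"], "clicks": 1, "impressions": 150, "ctr": 0.0067, "position": 8.7},
]
print(find_opportunities(sample_rows))
```

In the scheduled script you would pass `response.get('rows', [])` instead of the sample list and write the result to your tracking sheet.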

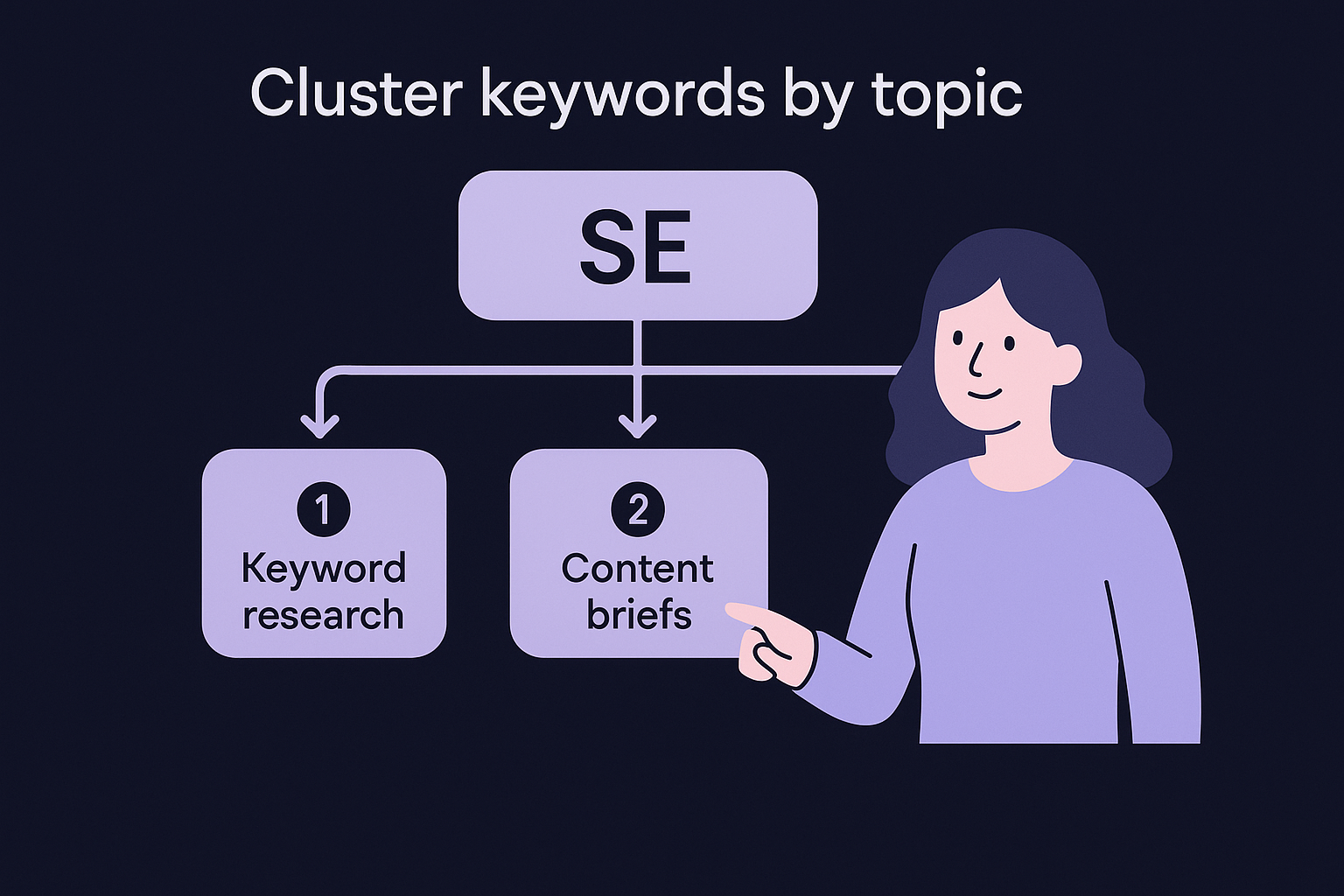

Cluster keywords by topic before you assign them to pages

Raw keyword lists are not useful until you group related terms together. Clustering lets you map each group to a single page instead of accidentally writing ten articles that all target slight variations of the same query and compete with each other. You can automate this step by building a clustering workflow that groups keywords by shared root terms, semantic similarity, or SERP overlap.

One well-clustered keyword group pointing to one well-optimized page will usually outperform five fragmented articles chasing the same intent.
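Of the three grouping methods, SERP overlap is the easiest to sketch without extra libraries: two keywords belong together when search engines rank many of the same URLs for both. The sketch below assumes you have already collected the top-ranking URLs for each keyword (for example from a rank-tracking or SERP data tool); the `serps` data and the threshold of 3 shared URLs are illustrative assumptions.

```python
def cluster_by_serp_overlap(serps, min_shared=3):
    """Greedily group keywords whose top-ranking URLs overlap.

    serps maps keyword -> list of top-ranking URLs. A keyword joins the first
    cluster whose seed keyword shares at least `min_shared` URLs with it.
    """
    clusters = []  # each cluster: {"seed": keyword, "urls": set, "keywords": list}
    for keyword, urls in serps.items():
        url_set = set(urls)
        for cluster in clusters:
            if len(url_set & cluster["urls"]) >= min_shared:
                cluster["keywords"].append(keyword)
                break
        else:
            # No existing cluster is close enough, so this keyword seeds a new one
            clusters.append({"seed": keyword, "urls": url_set, "keywords": [keyword]})
    return [cluster["keywords"] for cluster in clusters]


# Hypothetical SERP data: keyword -> top-ranking URLs
serps = {
    "how to automate seo": ["a.com/1", "b.com/2", "c.com/3", "d.com/4"],
    "seo automation tools": ["a.com/1", "b.com/2", "c.com/3", "e.com/5"],
    "keyword research tools": ["f.com/6", "g.com/7", "h.com/8", "a.com/1"],
}
print(cluster_by_serp_overlap(serps))
```

Comparing against each cluster's seed keeps the logic simple and predictable; the trade-off is that results depend on input order, so feed keywords in descending volume if you want the strongest term to seed each cluster.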

A simple clustering output table looks like this:

| Cluster | Primary keyword | Supporting keywords | Target page |
|---|---|---|---|
| SEO automation | how to automate seo | seo automation tools, ai seo workflow | /blog/seo-automation |
| Keyword research | keyword research tools | find keywords, keyword discovery | /blog/keyword-research |
| Content briefs | seo content brief | content brief template, brief generator | /blog/content-briefs |

Build this table automatically by running your raw keyword list through a semantic grouping script or a clustering API, then assign each cluster a target URL before passing it downstream to your content creation workflow.
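Once clusters exist, filling each table row is mechanical. This sketch assumes two rules of its own: the highest-volume keyword in a cluster becomes the primary keyword, and the target page is a `/blog/` slug derived from the cluster name. The volume figures are hypothetical.

```python
import re


def slugify(text):
    """Lowercase the text and collapse anything non-alphanumeric into hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def cluster_to_row(name, keywords, volumes):
    """Build one table row: cluster, primary keyword, supporting keywords, target page."""
    # Assumed rule: the highest search volume becomes the primary keyword
    ranked = sorted(keywords, key=lambda kw: volumes.get(kw, 0), reverse=True)
    return {
        "cluster": name,
        "primary": ranked[0],
        "supporting": ranked[1:],
        "target_page": f"/blog/{slugify(name)}",  # assumed URL pattern
    }


# Hypothetical monthly search volumes
volumes = {"how to automate seo": 900, "seo automation tools": 700, "ai seo workflow": 150}
row = cluster_to_row("SEO automation", list(volumes), volumes)
print(row)
```

If a page already exists for the topic, override the generated `target_page` with the existing URL rather than creating a competing one.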

Automate content briefs and drafting

Once you have a clustered keyword list, the next step in learning how to automate SEO is turning each keyword into a publishable article without doing the research and writing manually. Content briefs and drafting follow a predictable enough structure that you can automate both with a combination of competitor analysis, AI prompting, and a few reusable templates. The key is building a system that takes a keyword as input and produces a draft ready for a quick human review as output, with no manual work in between.

Build a repeatable brief template

A brief is only useful if it includes the right inputs for your AI writing tool. Every brief should contain the target keyword, the search intent, a suggested heading structure, the target word count, and a list of related terms the article should cover. You can generate most of this automatically by analyzing the top-ranking pages for your keyword and extracting their heading patterns, then feeding that data into a fixed template.

Here is a simple brief structure you can populate automatically for each keyword:

| Field | Example |
|---|---|
| Primary keyword | how to automate seo |
| Search intent | Informational, how-to guide |
| Target word count | 2,500 |
| Suggested H2s | What is SEO automation, Tools to use, Step-by-step workflow |
| Related terms to include | AI SEO, keyword clustering, content pipeline |
| Internal links to add | /blog/keyword-research, /blog/seo-tools |

The more specific your brief, the less revision your AI-generated draft will need before it is ready to publish.
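The heading-extraction step can be sketched with Python's standard-library HTML parser. The code below collects `<h2>` text from competitor pages you have already downloaded and keeps headings that appear on at least two of them as suggested H2s. The sample HTML, the two-page threshold, and the exact-match comparison after lowercasing are simplifying assumptions; real pages phrase headings differently, so expect to review the output.

```python
from collections import Counter
from html.parser import HTMLParser


class H2Collector(HTMLParser):
    """Collect the text inside every <h2> tag on a page."""

    def __init__(self):
        super().__init__()
        self.in_h2 = False
        self.headings = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self.in_h2 = True
            self.headings.append("")

    def handle_endtag(self, tag):
        if tag == "h2":
            self.in_h2 = False

    def handle_data(self, data):
        if self.in_h2:
            self.headings[-1] += data


def build_brief(keyword, intent, word_count, competitor_pages, min_pages=2):
    """Fill a fixed brief template from competitor HTML."""
    counts = Counter()
    for html in competitor_pages:
        parser = H2Collector()
        parser.feed(html)
        # Normalize whitespace and case; a set so repeats on one page count once
        counts.update({" ".join(h.split()).lower() for h in parser.headings})
    return {
        "primary_keyword": keyword,
        "search_intent": intent,
        "target_word_count": word_count,
        "suggested_h2s": [h for h, n in counts.most_common() if n >= min_pages],
    }


# Hypothetical competitor HTML snippets
pages = [
    "<h2>What is SEO automation</h2><h2>Tools to use</h2>",
    "<h2>What is SEO   automation</h2><h2>Pricing</h2>",
]
print(build_brief("how to automate seo", "Informational, how-to guide", 2500, pages))
```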

Feed briefs directly into an AI drafting workflow

With a filled brief as your input, you can pass it directly into an AI model via API to generate a full draft on demand. The prompt you use matters more than the tool you pick. Structure your prompt to include the brief fields as explicit instructions, specify the tone and format you want, and request Markdown output so it drops cleanly into your CMS without reformatting.

Here is a basic Python example that sends a brief to an AI API and saves the draft:

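```python
from openai import OpenAI

client = OpenAI()  # reads your API key from the OPENAI_API_KEY environment variable

brief = {
    "keyword": "how to automate seo",
    "intent": "how-to guide",
    "word_count": 2500,
    "headings": ["What is SEO automation", "Tools to use", "Step-by-step workflow"],
    "related_terms": ["AI SEO", "keyword clustering", "content pipeline"],
}

# Turn each brief field into an explicit instruction for the model
prompt = f"""Write a {brief['word_count']}-word {brief['intent']} article targeting
the keyword '{brief['keyword']}'.
Use these H2 headings: {', '.join(brief['headings'])}.
Cover these related terms naturally: {', '.join(brief['related_terms'])}.
Format the output in Markdown."""

response = client.chat.completions.create(
    model="gpt-4o",
    messages=[{"role": "user", "content": prompt}],
)

# Save the draft for a review pass before it goes to the CMS
with open("draft.md", "w", encoding="utf-8") as f:
    f.write(response.choices[0].message.content)
```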

Run this script whenever a new brief enters your pipeline and your drafts generate automatically, ready for a light review pass before publishing.

Automate on-page updates and internal links

On-page SEO is not a one-time task. Rankings shift, pages drift out of alignment with their target keywords, and internal links that made sense six months ago may now point to outdated or lower-priority content. When you learn how to automate SEO at the on-page level, you stop treating these as manual chores and instead run them as scheduled scripts that flag issues and apply fixes without requiring your attention every week.

Audit and update meta titles and descriptions automatically

Meta titles and descriptions directly affect your click-through rate in search results, and they go stale faster than most people expect. A keyword's search landscape shifts, a competitor changes their angle, or your own page title no longer matches the content below it. You can automate a weekly audit that pulls every page's current title and description via your CMS API, compares them against a set of rules covering length, keyword presence, and uniqueness, and flags every page that falls outside acceptable bounds.

Here is a simple Python snippet that checks title length across a list of your URLs:

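```python
import requests
from bs4 import BeautifulSoup

urls = ["https://yoursite.com/page-1", "https://yoursite.com/page-2"]

for url in urls:
    res = requests.get(url, timeout=10)
    soup = BeautifulSoup(res.text, "html.parser")
    title_tag = soup.find("title")
    # get_text(strip=True) drops whitespace and newlines around the title text
    title = title_tag.get_text(strip=True) if title_tag else ""
    length = len(title)
    # Flag missing titles and anything outside a 50-60 character range
    if not title:
        status = "Missing title"
    elif 50 <= length <= 60:
        status = "OK"
    else:
        status = "Review needed"
    print(f"{url} | {length} chars | {status}")
```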

Run this script on a weekly schedule and pipe the output to a shared spreadsheet so your team sees every flagged page without running manual checks each time.
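The title-length script above checks only one rule. The audit described earlier also covers descriptions, keyword presence, and uniqueness, and those checks can run on whatever your CMS API returns. This sketch assumes you have already collected each page's title, meta description, and target keyword into a dictionary; the 50-60 and 120-160 character ranges are common rules of thumb, not limits Google publishes.

```python
from collections import Counter


def audit_metadata(pages):
    """Flag pages whose title or description breaks simple rules.

    pages maps URL -> {"title": str, "description": str, "keyword": str}.
    """
    # Count normalized titles across the whole site to catch duplicates
    title_counts = Counter(p["title"].strip().lower() for p in pages.values())
    issues = {}
    for url, page in pages.items():
        title = page["title"].strip()
        description = page["description"].strip()
        problems = []
        if not 50 <= len(title) <= 60:
            problems.append(f"title length {len(title)}")
        if not 120 <= len(description) <= 160:
            problems.append(f"description length {len(description)}")
        if page["keyword"].lower() not in title.lower():
            problems.append("keyword missing from title")
        if title_counts[title.lower()] > 1:
            problems.append("duplicate title")
        if problems:
            issues[url] = problems
    return issues


# Hypothetical data, as it might come back from a CMS API
sample = {
    "/a": {"title": "SEO Tips", "description": "Short.", "keyword": "automate seo"},
    "/b": {"title": "SEO Tips", "description": "Short.", "keyword": "seo tips"},
}
print(audit_metadata(sample))
```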

Build a repeatable internal link workflow

Internal links distribute authority across your site and signal to search engines how your pages relate to each other. The problem is that most teams add internal links only when they remember to, which means newer pages sit without links pointing to them for weeks. You can fix this by building a simple workflow that cross-references your published URLs against the content of each new draft and suggests relevant anchor text and target URLs before the article goes live. Automating the suggestion step means you can generate this list in seconds by running a script that scans your existing content for keyword matches and outputs link candidates in a standard format, ready for a quick editorial check.

Set a rule that every new article must include at least three internal links before it publishes, and automate the suggestion step so meeting that rule takes under a minute.

The table below shows a format you can populate automatically for every article in your pipeline:

| Source page | Suggested anchor text | Target URL | Status |
|---|---|---|---|
| /blog/seo-automation | keyword clustering | /blog/keyword-research | Add |
| /blog/content-briefs | internal linking guide | /blog/internal-links | Add |
| /blog/rank-tracking | automate seo reports | /blog/seo-reporting | Review |
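
The suggestion step can be as simple as a phrase match. This sketch assumes you keep a hypothetical mapping of each published URL to the phrase it targets, scans a new draft for those phrases, and emits rows in the table format above. Exact phrase matching is a deliberate simplification that misses synonyms, so every row starts as "Review" for an editor to confirm.

```python
import re


def suggest_internal_links(source_url, draft_text, targets, limit=5):
    """Return link suggestions as (source, anchor text, target URL, status) rows.

    targets maps each published URL to the phrase it targets (hypothetical data).
    """
    rows = []
    for target_url, phrase in targets.items():
        if target_url == source_url:
            continue  # never suggest a page linking to itself
        # Whole-phrase, case-insensitive match so short phrases don't hit inside longer words
        match = re.search(rf"\b{re.escape(phrase)}\b", draft_text, re.IGNORECASE)
        if match:
            rows.append((source_url, match.group(0), target_url, "Review"))
    return rows[:limit]


# Hypothetical URL -> target phrase mapping and draft text
targets = {
    "/blog/keyword-research": "keyword clustering",
    "/blog/seo-reporting": "automate seo reports",
    "/blog/internal-links": "internal linking guide",
}
draft = "Start with keyword clustering, then automate SEO reports each week."
for row in suggest_internal_links("/blog/seo-automation", draft, targets):
    print(" | ".join(row))
```

Using the matched text from the draft as the anchor keeps the link in the article's own wording, and counting the returned rows tells you whether a draft meets the three-link rule.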

Automate technical audits and monitoring

Technical SEO problems compound quietly. A broken redirect, a missing canonical tag, or a crawl error on a key landing page can sit undetected for weeks and steadily drain your rankings before you notice the traffic drop. When you understand how to automate SEO at the technical layer, you shift from reactive firefighting to proactive monitoring. Scheduled crawls and automated alerts catch problems on the next run, not two months later when the damage already shows up in your analytics.

Schedule automated site crawls

Running a crawl manually is fine once, but your site changes constantly. New pages go live, redirects break, and duplicate content creeps in every time you update your CMS, migrate content, or change URL structures. The fix is to schedule a crawl that runs on a regular cadence and writes results to a file you can diff against the previous run.

The Python snippet below uses the requests library to check a list of your key URLs for HTTP status codes on a schedule:

```python
import csv
from datetime import date

import requests

urls = [
    "https://yoursite.com/",
    "https://yoursite.com/blog/",
    "https://yoursite.com/contact/",
]

results = []
for url in urls:
    try:
        # allow_redirects=False records 301/302 responses instead of silently following them
        res = requests.get(url, timeout=10, allow_redirects=False)
        status = res.status_code
    except requests.RequestException:
        status = "Error"  # timeouts, DNS failures, refused connections
    results.append({"url": url, "status": status, "date": date.today().isoformat()})

# Append one dated row per URL so each run can be compared with the previous one
with open("crawl_report.csv", "a", newline="") as f:
    writer = csv.DictWriter(f, fieldnames=["url", "status", "date"])
    writer.writerows(results)
```

Run this script daily via a cron job and store each output as a dated row in a shared CSV. Comparing today's status codes against yesterday's immediately surfaces any new 404s, 500 errors, or unexpected redirects without manual work.
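The comparison itself can be automated as well. This sketch reads the CSV that the daily script appends to (columns `url`, `status`, `date`, with no header row), takes the two most recent dates, and lists every URL whose status changed. It assumes ISO-formatted dates, so they sort correctly as strings; the sample rows are hypothetical.

```python
import csv


def load_rows(path="crawl_report.csv"):
    """Read the headerless crawl log as (url, status, date) tuples."""
    with open(path, newline="") as f:
        return [tuple(row) for row in csv.reader(f) if row]


def status_changes(rows):
    """Compare the two most recent crawl dates and report changed status codes."""
    dates = sorted({day for _, _, day in rows})
    if len(dates) < 2:
        return []  # need at least two runs to compare
    previous, latest = dates[-2], dates[-1]
    before = {url: status for url, status, day in rows if day == previous}
    after = {url: status for url, status, day in rows if day == latest}
    changes = []
    for url, status in after.items():
        old = before.get(url, "new URL")
        if status != old:
            changes.append((url, old, status))
    return changes


# Hypothetical log rows; in practice call status_changes(load_rows())
rows = [
    ("https://yoursite.com/", "200", "2026-03-10"),
    ("https://yoursite.com/blog/", "200", "2026-03-10"),
    ("https://yoursite.com/", "200", "2026-03-11"),
    ("https://yoursite.com/blog/", "404", "2026-03-11"),
]
print(status_changes(rows))
```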

Set up automated alerts for critical issues

Scheduled crawls generate data, but data sitting in a file does nothing on its own. You need alerts that fire the moment a critical threshold is crossed so you can respond within hours, not weeks. Build a simple monitoring layer on top of your crawl output that checks for specific conditions and sends a notification to your email or a team Slack channel when those conditions are met.

Alerts work best when they are specific enough to demand action, not so broad that they become noise you start ignoring.

Below is a quick reference for the conditions worth alerting on and the threshold that should trigger each one:

| Issue | Alert trigger | Priority |
|---|---|---|
| Page returns 404 | Any previously indexed URL | High |
| Page returns 500 | Any URL in crawl list | High |
| Title tag missing | Any page in sitemap | Medium |
| Redirect chain detected | 3 or more hops | Medium |
| Page load time exceeds limit | Over 3 seconds | Medium |

Pair this alert table with a weekly crawl diff that flags any new issues introduced since the last run, and your technical monitoring runs continuously without requiring you to check a dashboard manually.
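Here is a minimal sketch of that monitoring layer: a function that applies the table's thresholds to one crawled page, plus a sender for a Slack incoming webhook, which accepts a POST with a JSON body containing a `text` field. The page-record fields (`previously_indexed`, `in_sitemap`, `redirect_hops`, `load_seconds`) are hypothetical; map them to whatever your crawler actually outputs.

```python
import json
import urllib.request


def alerts_for(page):
    """Apply the alert table's thresholds to one crawled page record."""
    alerts = []
    if page["status"] == 404 and page.get("previously_indexed"):
        alerts.append(("High", "previously indexed URL returns 404"))
    if page["status"] == 500:
        alerts.append(("High", "URL returns 500"))
    if page.get("in_sitemap") and not page.get("title"):
        alerts.append(("Medium", "title tag missing"))
    if page.get("redirect_hops", 0) >= 3:
        alerts.append(("Medium", f"redirect chain of {page['redirect_hops']} hops"))
    if page.get("load_seconds", 0) > 3:
        alerts.append(("Medium", f"load time {page['load_seconds']}s"))
    return alerts


def send_to_slack(webhook_url, url, alerts):
    """Post one message per page to a Slack incoming webhook."""
    lines = [f"[{priority}] {message}" for priority, message in alerts]
    body = json.dumps({"text": f"SEO alert for {url}:\n" + "\n".join(lines)}).encode("utf-8")
    request = urllib.request.Request(
        webhook_url, data=body, headers={"Content-Type": "application/json"}
    )
    # urlopen raises HTTPError on non-2xx responses, so failures are not silent
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


# Hypothetical crawl record
page = {"url": "https://yoursite.com/old-post/", "status": 404,
        "previously_indexed": True, "redirect_hops": 0}
print(alerts_for(page))
```

Only call `send_to_slack` when `alerts_for` returns something, so the channel stays quiet on healthy days.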

Automate reporting, refreshes, and links

The final layer of learning how to automate SEO covers the tasks that keep your existing work performing over time. Reporting, content refreshes, and backlink monitoring are easy to skip when you're focused on creating new content, but neglecting them means you lose ground on pages that already rank and miss the early warning signs of a traffic drop.

Build automated rank and traffic reports

Manual reporting pulls you away from work that actually moves rankings. Instead, connect the Search Console API to a lightweight weekly script that exports your top pages by clicks, your biggest impression gainers, and any queries where your click-through rate dropped more than 10 percent. Feed those outputs into a Google Sheet that updates automatically so your team always has a live view without anyone running exports.

Automated reports are only useful if they highlight what changed, not just what the current numbers are.

The table below shows the key metrics worth pulling automatically each week:

| Metric | Source | Alert condition |
|---|---|---|
| Clicks by page | Search Console API | Drop over 20% week-on-week |
| Average position | Search Console API | Drop over 5 positions |
| Click-through rate | Search Console API | Drop below 2% on ranked pages |
| Organic sessions | Analytics API | Drop over 15% week-on-week |
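
Highlighting what changed comes down to comparing this week's numbers with last week's. This sketch applies the alert conditions from the table to per-page metrics you have already pulled; the dictionary shape and sample figures are assumptions. Note that a larger average position number means a worse ranking, so a "drop" in position is an increase in the number.

```python
def weekly_alerts(last_week, this_week):
    """Compare per-page metrics and return alerts matching the table's conditions.

    Both arguments map page URL -> {"clicks", "position", "ctr", "sessions"}.
    """
    alerts = []
    for page, now in this_week.items():
        before = last_week.get(page)
        if before is None:
            continue  # new page, nothing to compare against yet
        if before["clicks"] and now["clicks"] < before["clicks"] * 0.8:
            alerts.append((page, "clicks down more than 20%"))
        # Position is a rank, so losing ground means the number gets bigger
        if now["position"] - before["position"] > 5:
            alerts.append((page, "average position down more than 5"))
        if now["ctr"] < 0.02:
            alerts.append((page, "CTR below 2%"))
        if before["sessions"] and now["sessions"] < before["sessions"] * 0.85:
            alerts.append((page, "organic sessions down more than 15%"))
    return alerts


# Hypothetical metrics for one page
last_week = {"/blog/seo-automation": {"clicks": 200, "position": 4.0, "ctr": 0.05, "sessions": 300}}
this_week = {"/blog/seo-automation": {"clicks": 150, "position": 10.5, "ctr": 0.015, "sessions": 290}}
for page, message in weekly_alerts(last_week, this_week):
    print(page, "|", message)
```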

Schedule content refresh audits

Content decays faster than most people expect. Pages that ranked well 12 months ago often drift down as competitors publish fresher, more comprehensive versions of the same topic. Build a quarterly refresh script that pulls your top 20 pages by impressions, checks their publish date, and flags any page older than six months that has lost more than three positions since its peak. Those flagged pages become your refresh queue, automatically prioritized by traffic impact rather than gut feel.

Each flagged article gets a focused update: add current statistics, expand thin sections, and adjust headings to match current search intent signals from the latest competitor pages. A targeted 30-minute update on a well-ranked article often outperforms publishing a brand new page chasing the same keyword.
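The flagging rule translates directly into code. This sketch assumes each page record already carries its impressions, publish date, peak position, and current position (the field names and sample data are hypothetical). It keeps the top 20 pages by impressions and returns those older than roughly six months (182 days) that have slipped more than three positions from their peak.

```python
from datetime import date, timedelta


def refresh_queue(pages, today=None, top_n=20, max_age_days=182, max_slip=3):
    """Return URLs to refresh, highest-impression pages first."""
    today = today or date.today()
    top_pages = sorted(pages, key=lambda p: p["impressions"], reverse=True)[:top_n]
    queue = []
    for page in top_pages:
        too_old = today - page["published"] > timedelta(days=max_age_days)
        # Higher position numbers are worse, so slip = current minus best (peak)
        slipped = page["position"] - page["peak_position"] > max_slip
        if too_old and slipped:
            queue.append(page["url"])
    return queue


# Hypothetical page records
pages = [
    {"url": "/blog/keyword-research", "impressions": 12000,
     "published": date(2025, 3, 1), "peak_position": 3.0, "position": 8.5},
    {"url": "/blog/seo-automation", "impressions": 9000,
     "published": date(2026, 1, 15), "peak_position": 2.0, "position": 9.0},
    {"url": "/blog/content-briefs", "impressions": 4000,
     "published": date(2025, 6, 1), "peak_position": 5.0, "position": 6.0},
]
print(refresh_queue(pages, today=date(2026, 3, 11)))
```

Passing `today` explicitly makes the function easy to test; in the scheduled job you can leave it out.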

Automate backlink monitoring

New backlinks and lost backlinks both signal ranking changes before you see them in your traffic data. The Search Console API does not expose the Links report, so set up a recurring weekly export instead (from the Search Console interface or from a backlink tool that offers an API) and diff the current link list against the previous snapshot. Any lost link pointing to a high-value page should trigger an automated alert so you can act before the ranking impact compounds.
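The diff step can be sketched as a set comparison. This assumes each weekly export has been loaded as a set of (linking page, target page) pairs; the `high_value` set of target pages is a hypothetical input you would define yourself, and the sample links are made up.

```python
def backlink_changes(previous, current, high_value):
    """Diff two backlink snapshots and flag lost links to important pages.

    previous / current: sets of (linking_page, target_page) pairs.
    """
    lost = previous - current
    gained = current - previous
    # Only lost links to high-value targets are worth an alert
    urgent = sorted(link for link in lost if link[1] in high_value)
    return {"lost": sorted(lost), "gained": sorted(gained), "alert": urgent}


# Hypothetical weekly snapshots
last_week = {("news.example.com/a", "/pricing"), ("blog.example.com/b", "/blog/seo-automation")}
this_week = {("blog.example.com/b", "/blog/seo-automation"), ("forum.example.com/c", "/blog/keyword-research")}
result = backlink_changes(last_week, this_week, high_value={"/pricing"})
print(result["alert"])
```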

Next steps to put this on autopilot

You now have a complete framework for how to automate SEO across every major workflow: keyword discovery, content creation, on-page updates, technical audits, and ongoing reporting. The next step is to pick one workflow from this guide and build it this week rather than planning to tackle all of them at once. Start with the task that consumes the most time in your current process, get it running reliably, then layer in the next piece. Each workflow you automate compounds on the previous one, and within a few weeks your SEO pipeline runs largely without you.

If you want to skip the setup work entirely and get a fully automated content pipeline running today, RankYak handles keyword discovery, article writing, and direct publishing to your site every day. You can start a 3-day free trial and have your first article live within minutes of connecting your site.

