Technical SEO Strategy: A Framework for Crawling & Indexing

You can publish content every single day, target the right keywords, and still watch your pages sit buried in search results. The culprit is often not your content but your site's foundation. A solid technical SEO strategy determines whether search engines can actually find, crawl, and index your pages. Without it, even the best articles become invisible.

Technical SEO covers everything from site architecture and crawl budgets to XML sitemaps and page speed. These aren't glamorous topics, but they're the infrastructure that makes ranking possible. When Google's bots hit broken links, slow load times, or confusing URL structures, they move on. Your content never gets a fair shot. The good news: once you fix these issues, every piece of content you publish has a clear path to the index.

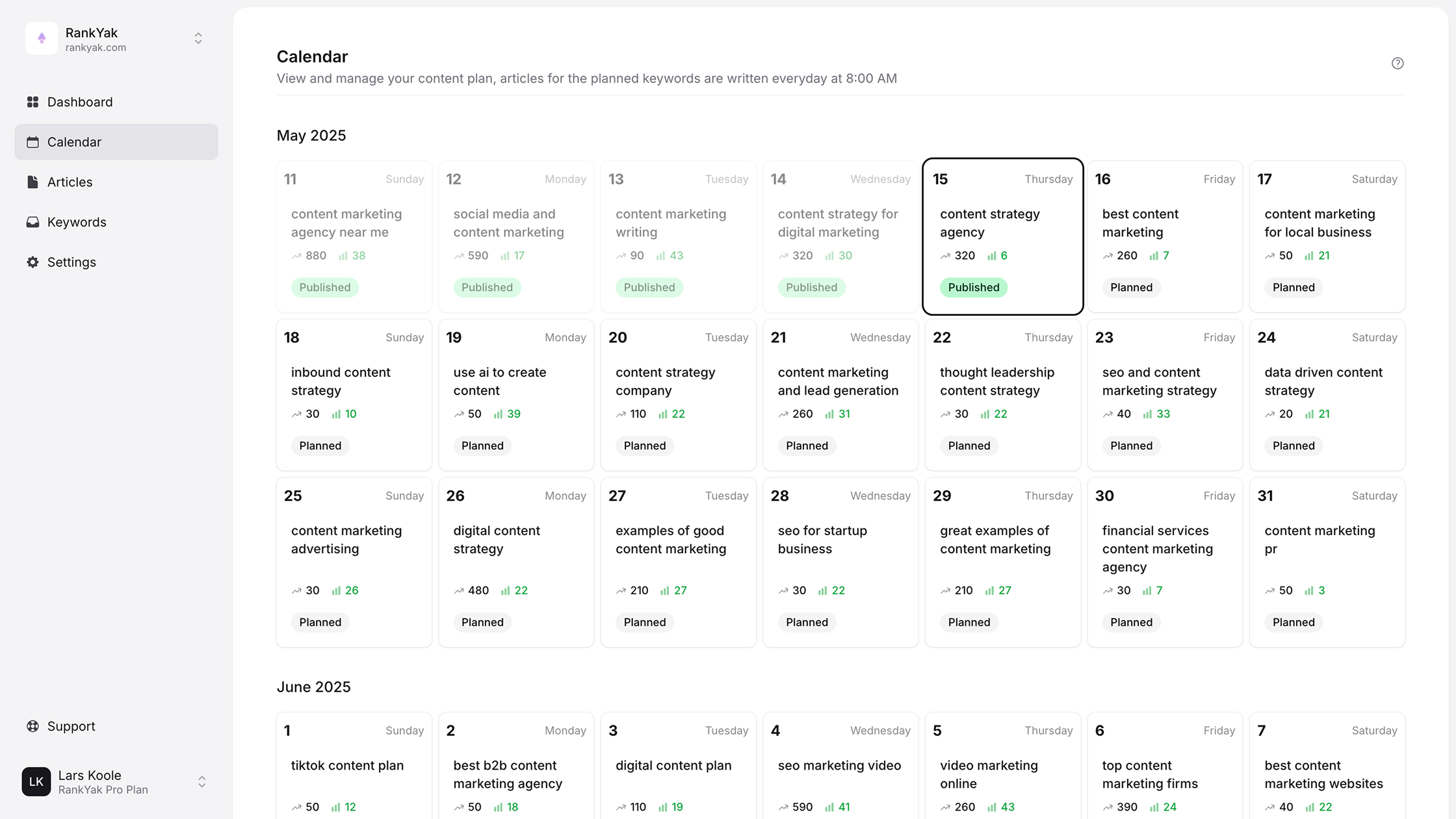

This guide breaks down a practical framework for auditing and improving your site's crawlability and indexation. You'll learn how to identify technical blockers, prioritize fixes, and build a structure that supports long-term organic growth. At RankYak, we automate content creation and publishing, but automation only pays off when your technical foundation is solid. Consider this your blueprint for making sure search engines can actually see what you're building.

Why a technical SEO strategy matters

Search engines operate on a simple principle: they can only rank what they can find, crawl, and index. When your site has technical issues, Google's bots hit roadblocks before they ever evaluate your content quality. You could have the most comprehensive, well-researched articles in your niche, but if search engines can't access them properly, they'll never appear in results. A technical SEO strategy ensures your site's infrastructure supports discoverability instead of sabotaging it.

How technical issues block your content from ranking

Broken internal links create dead ends that prevent bots from discovering new pages. If your site architecture buries important content five or six clicks deep, crawlers may never reach it. Robots.txt misconfigurations can accidentally block entire sections of your site, while missing or incorrect XML sitemaps leave Google guessing about what content exists. Each of these issues acts as a barrier between your content and the index.

JavaScript rendering problems present another common obstacle. When search engines struggle to render JavaScript-heavy pages, they may index incomplete versions or skip the page entirely. Slow server response times trigger timeouts, causing crawlers to abandon pages before they fully load. These technical failures mean your content never gets evaluated on its merits because it never makes it through the discovery phase.

Technical SEO removes the obstacles between your content and search engine visibility.

The real cost of technical SEO neglect

Every site has a crawl budget: the set of URLs Google can and wants to crawl in a given period, shaped by how much load your server can handle and how much demand there is for your content. When your site wastes this budget on duplicate URLs, broken pages, or low-value content, fewer important pages get crawled. This becomes critical as your site grows. A site with 10,000 pages but poor technical structure might have only 2,000 pages indexed, while a well-optimized competitor with the same content volume gets full coverage.

Poor technical SEO also compounds over time. Each new piece of content you publish inherits the same structural problems. If your pages take 6 seconds to load, every article you add becomes another slow-loading page. Duplicate content issues multiply. Indexation gaps widen. The gap between your content output and your actual indexed pages grows, meaning you're creating content that never reaches your audience.

Why fixing technical SEO multiplies content ROI

When you solve technical issues, every piece of content you've already published suddenly has a better chance of ranking. Improving site speed benefits all existing pages, not just new ones. Fixing crawl efficiency means your archive gets re-crawled and re-evaluated. Proper URL structures and internal linking help distribute authority across your content library.

This creates a multiplier effect on your content investments. At RankYak, we see this constantly: sites that fix their technical foundation before scaling content production get better results from every article. The same effort that would have been wasted on a poorly-optimized site suddenly drives measurable traffic gains. Your technical infrastructure determines whether you're building on solid ground or sand. Getting this right first means every hour spent on content creation actually moves the needle on rankings and traffic.

The technical SEO strategy framework at a glance

Your technical SEO strategy needs structure, not scattered fixes. Without a clear framework, you end up chasing symptoms instead of solving root causes. A proper approach divides technical work into distinct focus areas, each addressing specific ways search engines interact with your site. This framework covers the complete journey from discovery to ranking readiness.

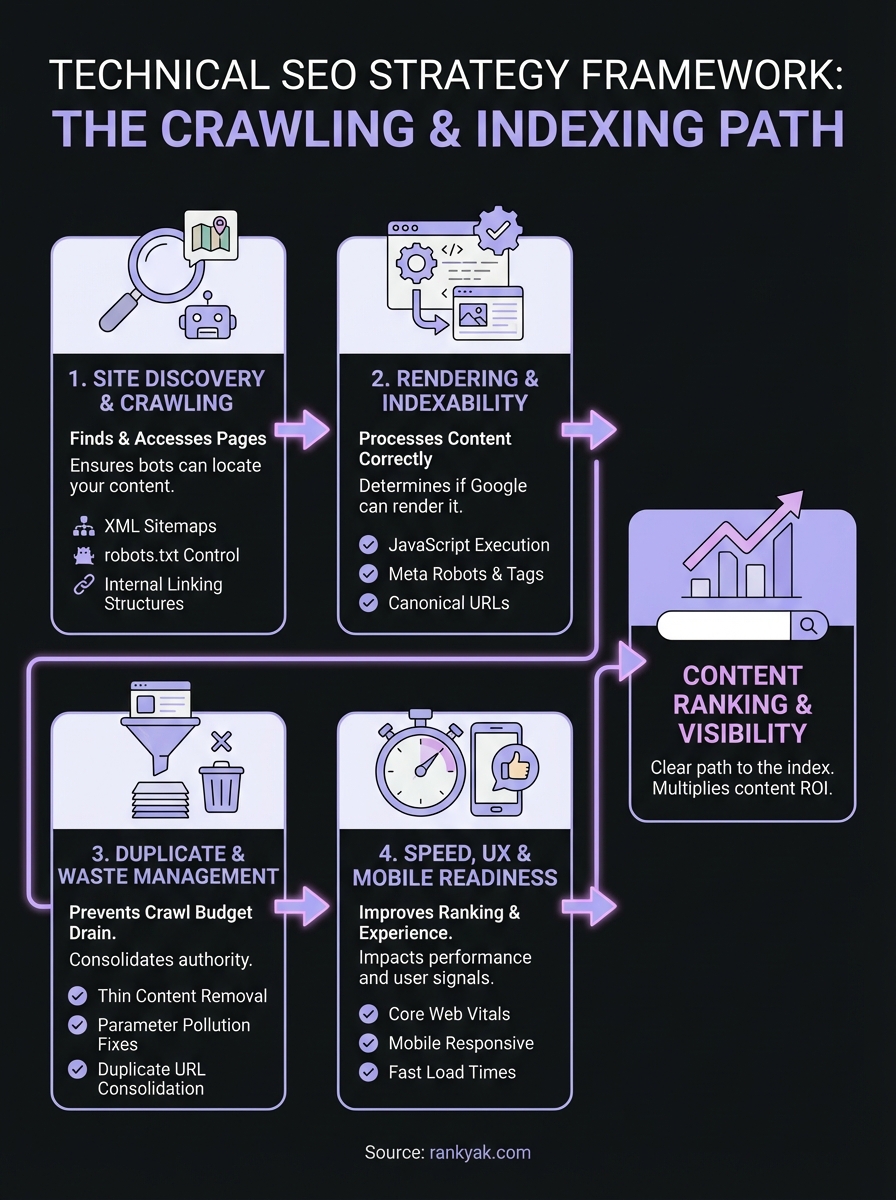

The four core pillars of technical SEO

Every effective technical SEO strategy rests on four foundational pillars. Site discovery and crawling ensures search engines can find and access your pages through proper XML sitemaps, robots.txt configuration, and internal linking. Rendering and indexability determines whether Google can process your content correctly, including JavaScript execution, meta robots tags, and canonical URLs. Duplicate and waste management prevents crawl budget drain by eliminating thin content, parameter pollution, and duplicate URLs. Speed, UX, and mobile readiness covers Core Web Vitals, mobile responsiveness, and server performance that impact both rankings and user experience.

Each pillar addresses a different stage of how search engines process your site. Discovery happens first, crawling next, then rendering, indexing decisions, and finally ranking evaluation. If you neglect any single pillar, you create bottlenecks that limit the effectiveness of everything else.

A technical SEO strategy works systematically through each pillar instead of applying random fixes.

How these pillars connect in practice

These four areas don't operate in isolation. Your crawl budget gets wasted when poor URL structure creates duplicates, which is a crawling issue caused by an architecture problem. Slow page speed delays rendering, which impacts indexability, which affects how quickly new content appears in search results. JavaScript rendering problems often mask indexation issues because bots see incomplete content.

The framework creates a diagnostic sequence. When pages don't rank, you start with discovery: can Google find the page? Then crawling: does anything block access? Next rendering: does Google see complete content? Then indexability: is the page allowed in the index? Finally performance: does the page meet technical quality standards? This systematic approach reveals exactly where breakdowns occur instead of guessing.

Working through each pillar in order builds a foundation that supports long-term growth. You can't optimize what isn't crawled, can't index what isn't rendered, and can't rank what performs poorly. Each fix compounds the effectiveness of your content library, turning technical improvements into sustained visibility gains across your entire site.

How to improve site discovery and crawling

Search engines can't rank content they never find. Your technical SEO strategy starts with making every important page discoverable and accessible to crawlers. This means controlling what gets crawled, ensuring clear pathways to all content, and eliminating obstacles that waste your site's crawl budget. When you optimize discovery and crawling, you give Google the complete picture of your content library instead of forcing it to guess.

Control access with robots.txt and XML sitemaps

Your robots.txt file acts as the first checkpoint for search engine bots. Review it regularly to confirm you're not accidentally blocking important sections. Common mistakes include blocking CSS and JavaScript files that Google needs for rendering, or blocking entire directories that contain valuable content. The robots.txt report in Google Search Console shows which version of the file Google fetched and any parsing errors, so you can catch these problems before they cause indexation issues.
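A quick way to catch accidental blocks is to check a list of must-crawl URLs against your rules. The sketch below uses Python's standard `urllib.robotparser`; the robots.txt content and the URLs are hypothetical examples. Treat it as a first-pass check, since the standard-library parser uses simple prefix matching and may not handle wildcard patterns the way Google does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice, load your site's real file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /assets/
"""

# Hypothetical URLs that must stay crawlable, including rendering resources.
MUST_BE_CRAWLABLE = [
    "https://example.com/blog/technical-seo/",
    "https://example.com/assets/site.css",
    "https://example.com/assets/app.js",
]


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


blocked = blocked_urls(ROBOTS_TXT, MUST_BE_CRAWLABLE)
for url in blocked:
    print("Blocked by robots.txt:", url)
```

Here the `/assets/` rule blocks the CSS and JavaScript files, which is exactly the rendering mistake described above.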

XML sitemaps provide the roadmap to your content. Submit separate sitemaps for different content types (articles, products, pages) so you can see indexing problems per section. Include only indexable URLs and update sitemaps whenever you publish new content or make structural changes. Large sites benefit from sitemap index files that organize multiple sitemaps, keeping each one under the protocol's limits of 50,000 URLs and 50 MB uncompressed.

Your XML sitemap should contain only URLs you actually want indexed, not every page on your site.
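As a rough illustration of the sitemap index approach, the sketch below splits a URL list into sitemap files of at most 50,000 URLs and builds an index that points to them. The file naming scheme (`sitemap-1.xml`, and so on) and the example URLs are assumptions, not a required convention.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000


def build_sitemaps(urls, base_url, max_urls=MAX_URLS_PER_SITEMAP):
    """Split urls into sitemap files; return (index_xml, {filename: sitemap_xml})."""
    files = {}
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for start in range(0, len(urls), max_urls):
        # Hypothetical naming scheme: sitemap-1.xml, sitemap-2.xml, ...
        filename = f"sitemap-{start // max_urls + 1}.xml"
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for url in urls[start:start + max_urls]:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
        files[filename] = ET.tostring(urlset, encoding="unicode")
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = f"{base_url}/{filename}"
    return ET.tostring(index, encoding="unicode"), files


# Hypothetical URL list; max_urls is lowered only to make the split visible.
urls = [f"https://example.com/blog/post-{i}/" for i in range(1, 6)]
index_xml, files = build_sitemaps(urls, "https://example.com", max_urls=2)
print(sorted(files))
```

Feed the function only URLs you have already confirmed as indexable, so the sitemap never advertises redirected or noindexed pages.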

Build crawlable internal linking structures

Every page should sit three clicks or fewer from your homepage. Deep content that requires six or seven clicks rarely gets crawled consistently, especially on larger sites. Your internal linking strategy determines how easily crawlers flow through your content and how authority distributes across pages.

Contextual links within content carry more weight than footer links or sitemaps. When you publish new articles, immediately link to them from relevant existing content to speed up discovery. Avoid orphan pages that have zero internal links pointing to them, as these become invisible to both users and search engines unless they appear in your sitemap.
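If you have a crawl export of your internal links, click depth and unreachable pages fall out of a breadth-first search from the homepage. A minimal sketch, assuming a hypothetical link graph stored as a dictionary of page to linked pages:

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search from home; returns {url: clicks needed to reach it}."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
links = {
    "/": ["/blog/", "/pricing/"],
    "/blog/": ["/blog/a/"],
    "/blog/a/": ["/blog/b/"],
    "/blog/b/": ["/blog/c/"],
    "/blog/c/": [],
    "/pricing/": [],
    "/old-landing/": ["/pricing/"],  # no page links to this one
}

depths = click_depths(links, "/")
too_deep = sorted(page for page, depth in depths.items() if depth > 3)
orphans = sorted(set(links) - set(depths))
print("Deeper than three clicks:", too_deep)
print("Unreachable from the homepage:", orphans)
```

Pages in `too_deep` need a link from something closer to the homepage, and pages in `orphans` need at least one internal link.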

Optimize crawl budget efficiency

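Google allocates a limited crawl budget to each site based on how popular its content is and how well the server copes with crawling. Wasted crawl budget means fewer important pages get crawled and indexed. Remove or consolidate thin content, parameter-heavy URLs, and duplicate pages that consume crawl resources without adding value. Keep in mind that a noindex tag keeps a page out of the index but does not stop Google from requesting it, so noindex alone does little to save crawl budget.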

Monitor your server response times and fix 404 errors that waste crawl budget on dead pages. Use canonical tags to consolidate duplicate versions of content. Redirect chains and loops force crawlers to make multiple requests for a single page, so audit your redirects and eliminate any chains longer than two hops.
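A redirect audit can be sketched as a walk through a source-to-target map that flags loops and chains longer than two hops. The redirect map below is hypothetical; in practice you would export it from your server configuration or a crawl.

```python
def trace_redirects(redirects, url, max_hops=10):
    """Follow url through the redirects map; return (chain, loop_detected)."""
    chain = [url]
    while chain[-1] in redirects and len(chain) <= max_hops:
        nxt = redirects[chain[-1]]
        if nxt in chain:
            return chain + [nxt], True
        chain.append(nxt)
    return chain, False


# Hypothetical redirect map: source path -> target path.
redirects = {
    "/old": "/older",
    "/older": "/oldest",
    "/oldest": "/new",
    "/a": "/b",
    "/b": "/a",
    "/promo": "/pricing",
}

for start in ["/old", "/a", "/promo"]:
    chain, loop = trace_redirects(redirects, start)
    hops = len(chain) - 1
    if loop:
        print("Loop:", " -> ".join(chain))
    elif hops > 2:
        print(f"Chain of {hops} hops:", " -> ".join(chain))
```

The fix for a flagged chain is to point the first URL straight at the final destination.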

How to improve rendering and indexability

Getting pages discovered and crawled means nothing if search engines can't render and index them correctly. Rendering determines whether Google sees your complete content, while indexability controls which pages actually enter the search index. Your technical SEO strategy must address both to ensure crawled pages become rankable assets instead of wasted crawl budget.

Ensure JavaScript renders properly

Google executes JavaScript to render modern websites, but this process introduces delays and potential failures. Test your pages with the URL Inspection Tool in Google Search Console to see exactly what Google renders. Compare the rendered HTML to your source code to catch content that disappears during JavaScript execution.
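The same comparison can be roughed out offline: extract the visible words from the raw source and from the rendered HTML, then look at what exists only after JavaScript runs. The two HTML snippets below are hypothetical stand-ins for your server response and a rendered snapshot.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible words, skipping the contents of script and style tags."""

    def __init__(self):
        super().__init__()
        self.words = set()
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.words.update(data.lower().split())


def visible_words(html):
    extractor = TextExtractor()
    extractor.feed(html)
    return extractor.words


# Hypothetical raw source (before JavaScript) and rendered HTML (after JavaScript).
raw_html = '<html><body><div id="app"></div><script>render()</script></body></html>'
rendered_html = '<html><body><div id="app"><h1>Crawl budget guide</h1></div></body></html>'

js_only = sorted(visible_words(rendered_html) - visible_words(raw_html))
print("Words that exist only after rendering:", js_only)
```

A long `js_only` list means most of the page depends on rendering, which is where server-side rendering pays off.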

Server-side rendering (SSR) or static site generation eliminates rendering risks by serving fully-formed HTML to crawlers. If you rely on client-side rendering, ensure critical content loads quickly and doesn't depend on user interactions. Lazy-loaded content below the fold sometimes gets indexed, but above-the-fold content must render immediately without JavaScript delays.

Google's rendering queue adds latency, so pages that require JavaScript execution can be indexed more slowly than static HTML.

Configure meta robots and indexing directives

Meta robots tags control whether individual pages enter the index. Check for noindex tags on pages you want ranked, as these completely block indexation regardless of content quality. Developers sometimes add noindex during staging and forget to remove it before launch, creating invisible pages that waste crawl budget.

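X-Robots-Tag HTTP headers provide server-level indexing control for non-HTML files like PDFs. Use these alongside meta tags to create layered indexing directives. Avoid conflicting signals where your robots.txt blocks crawling of a page that also carries a noindex directive: Google can't read a noindex tag on a page it isn't allowed to fetch, so the URL can stay in the index. A page you want removed from the index must remain crawlable until Google has seen the noindex.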
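To spot stray noindex directives, you can read both sources for a page: the meta robots tag in the HTML and the `X-Robots-Tag` response header. This is a simplified sketch with hypothetical inputs; it ignores user-agent-specific directives such as `googlebot: noindex` and expects the header name in exactly the casing shown.

```python
from html.parser import HTMLParser


class MetaRobotsParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def indexing_directives(html, headers):
    """Merge directives from the meta robots tag and the X-Robots-Tag header."""
    parser = MetaRobotsParser()
    parser.feed(html)
    header = headers.get("X-Robots-Tag", "")
    from_header = {part.strip().lower() for part in header.split(",") if part.strip()}
    return parser.directives | from_header


def is_indexable(html, headers):
    """A page is treated as blocked if either source says noindex (or none)."""
    return not ({"noindex", "none"} & indexing_directives(html, headers))


# Hypothetical page that still carries a staging noindex tag.
staging_html = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_indexable(staging_html, {}))
```

Running this over every URL in your sitemap is a cheap way to find pages that are listed for indexing but blocked from it.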

Set up canonical URLs correctly

Canonical tags tell Google which version of duplicate or similar content to index. Self-referencing canonicals on every page prevent accidental duplicate indexation from URL parameters or session IDs. Point all variations (HTTP/HTTPS, www/non-www, trailing slashes) to your preferred version.

Cross-domain canonicals work when you syndicate content, but they ask Google to consolidate ranking signals on the canonical page, so the copy on your own site is unlikely to rank. Verify that canonical tags point to indexable, accessible URLs: Google treats a canonical tag as a hint, and it may disregard one that references a noindexed or redirected page. Check your implementation with the URL Inspection Tool in Search Console, which shows the canonical Google actually selected, to catch canonical chains or loops that confuse indexing decisions.
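These canonical checks are easy to automate once you have crawl data. The sketch below assumes a hypothetical crawl export where each URL maps to its declared canonical, HTTP status, and noindex flag, and it reports canonicals that point at redirected, noindexed, or chained targets.

```python
def canonical_problems(pages):
    """pages maps url -> {"canonical": str, "status": int, "noindex": bool}."""
    problems = {}
    for url, page in pages.items():
        target = page["canonical"]
        if target == url:
            continue  # self-referencing canonical, nothing to check
        target_page = pages.get(target)
        if target_page is None:
            problems[url] = "canonical target was not crawled"
        elif target_page["status"] != 200:
            problems[url] = f"canonical target returns {target_page['status']}"
        elif target_page["noindex"]:
            problems[url] = "canonical target is noindexed"
        elif target_page["canonical"] != target:
            problems[url] = "canonical chain"
    return problems


# Hypothetical crawl export.
pages = {
    "/shoes/": {"canonical": "/shoes/", "status": 200, "noindex": False},
    "/shoes/?sort=price": {"canonical": "/shoes/", "status": 200, "noindex": False},
    "/boots/": {"canonical": "/boots-old/", "status": 200, "noindex": False},
    "/boots-old/": {"canonical": "/boots-old/", "status": 301, "noindex": False},
    "/hats/": {"canonical": "/hats-draft/", "status": 200, "noindex": False},
    "/hats-draft/": {"canonical": "/hats-draft/", "status": 200, "noindex": True},
}

problems = canonical_problems(pages)
for url, reason in problems.items():
    print(url, "->", reason)
```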

How to prevent duplicate and wasted URLs

Duplicate URLs dilute your authority across multiple pages instead of consolidating it into stronger ranking signals. When search engines find identical or nearly identical content on different URLs, they waste crawl budget evaluating each version and struggle to determine which one deserves to rank. Your technical SEO strategy must identify and eliminate these duplicates to focus ranking power on your best pages.

Eliminate parameter-based duplicates

URL parameters create infinite duplicate versions of the same page. Session IDs, tracking codes, and sorting parameters generate unique URLs that display identical content. An ecommerce site with five filter options can produce hundreds of duplicate product listings, each consuming precious crawl budget.

Google retired the URL Parameters tool in Search Console in 2022, so you can no longer tell Google there how to treat each parameter. Handle parameters on your own site instead. Use canonical tags to point parameter variations back to the clean base URL, link internally only to clean URLs, and keep tracking parameters out of internal links. For session IDs, switch to cookie-based session management that keeps URLs clean instead of appending identifiers to every link.

Parameter pollution wastes crawl budget faster than almost any other technical issue because each variation looks like a unique page.
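The clean base URL for a canonical tag can be derived by stripping parameters that don't change the content. A minimal sketch, assuming a hypothetical list of tracking and session parameter names that you would replace with the ones your site actually uses:

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters that never change page content on this site.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "gclid", "fbclid", "sessionid"}


def clean_url(url):
    """Drop tracking/session parameters and sort the rest for a stable URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    ]
    query = urlencode(sorted(kept))
    return urlunsplit((parts.scheme, parts.netloc, parts.path, query, ""))


print(clean_url("https://example.com/shoes?utm_source=news&color=red&sessionid=abc"))
```

Sorting the remaining parameters means `?size=9&color=red` and `?color=red&size=9` collapse to one URL instead of two.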

Consolidate protocol and subdomain variations

HTTP and HTTPS versions of your site create complete duplicate sets of every page. The same problem occurs with www and non-www subdomains. You need server-level 301 redirects that permanently redirect all variations to your canonical version before search engines waste time crawling duplicates.

Test all four combinations (http://example.com, https://example.com, http://www.example.com, https://www.example.com) to confirm they redirect properly. Trailing slash inconsistencies create additional duplicates where /page and /page/ both load successfully. Configure your server to handle trailing slashes consistently, either always adding them or always removing them, then redirect the non-canonical version to maintain one authoritative URL.
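It helps to write the expected redirect target down as a single function, then compare your server's actual responses against it. The sketch below assumes HTTPS, the www host, and always-trailing-slash as the preferred policy; those choices and the host name are assumptions, so swap in your own.

```python
from urllib.parse import urlsplit, urlunsplit

CANONICAL_HOST = "www.example.com"  # hypothetical preferred host


def canonical_url(url):
    """Map protocol, host, and trailing-slash variants to one canonical URL."""
    parts = urlsplit(url)
    path = parts.path or "/"
    last_segment = path.rsplit("/", 1)[-1]
    # Assumed policy: add a trailing slash unless the path looks like a file.
    if not path.endswith("/") and "." not in last_segment:
        path += "/"
    return urlunsplit(("https", CANONICAL_HOST, path, parts.query, ""))


variants = [
    "http://example.com/page",
    "https://example.com/page",
    "http://www.example.com/page/",
    "https://www.example.com/page/",
]
targets = {canonical_url(variant) for variant in variants}
print(targets)
```

Every variant should 301 to its computed target in a single hop.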

Control faceted navigation and filters

Faceted navigation on ecommerce sites generates a combinatorial explosion of duplicate URLs. A category page filtered by color, size, and price range produces dozens of URLs showing overlapping sets of the same products. Search engines can crawl and index these variations as separate pages, fragmenting your ranking potential across near-duplicates.

Block filter parameters in robots.txt or use noindex tags on filtered pages to keep them out of the index. Pick one method per URL pattern, because Google can only see a noindex tag on a page it is allowed to crawl. Alternatively, implement view=all pages that display complete product sets without filters, then canonical all filtered versions to this comprehensive page. Your goal is one indexable URL per genuinely unique product or category, not hundreds of permutations showing rearranged results.
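To see how quickly filters multiply, count the combinations: each facet is either unset or set to one of its values, so the number of filtered URLs is the product of (values + 1) across facets, minus the unfiltered page. The facets below are hypothetical, and the count assumes single-select filters with a fixed parameter order.

```python
from math import prod

# Hypothetical facets on one category page.
facets = {
    "color": ["red", "blue", "green"],
    "size": ["s", "m", "l", "xl"],
    "price": ["0-50", "50-100"],
}


def count_filtered_urls(facets):
    """Each facet is unset or set to one value; exclude the unfiltered page."""
    return prod(len(values) + 1 for values in facets.values()) - 1


print(count_filtered_urls(facets))  # (3+1) * (4+1) * (2+1) - 1 = 59
```

Multi-select filters or parameters that appear in varying order push the number far higher.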

How to improve speed, UX, and mobile readiness

Page speed, user experience, and mobile optimization affect how search engines evaluate your pages for ranking. Google's ranking systems use Core Web Vitals, which measure loading performance, interactivity, and visual stability, as one signal among many. When your pages load slowly, bounce rates increase and engagement drops, costing you visitors even where rankings hold. Your technical SEO strategy must address these performance metrics to compete effectively in modern search results.

Optimize Core Web Vitals metrics

Largest Contentful Paint (LCP) measures how quickly your main content loads, with 2.5 seconds or faster marking good performance. Compress images using modern formats such as WebP, lazy-load below-the-fold images (but not the LCP element itself), and reduce server response times to improve LCP. Interaction to Next Paint (INP), which replaced First Input Delay (FID) as a Core Web Vital in March 2024, tracks responsiveness, with 200 milliseconds or less marking good performance. Minimize JavaScript execution time and remove unused code that blocks the main thread.

Cumulative Layout Shift (CLS) measures visual stability by scoring unexpected layout movements, with 0.1 or lower marking good performance. Reserve space for images and ads with explicit width and height attributes so content doesn't jump around during loading. Avoid inserting dynamic content above existing page elements. Test your Core Web Vitals through PageSpeed Insights to identify specific bottlenecks, then prioritize fixes based on which metrics fall outside acceptable ranges.
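For triage, it can help to bucket measured values against Google's published boundaries. This sketch uses the "good" and "poor" thresholds for LCP and CLS, and it assumes Interaction to Next Paint (INP) as the interactivity metric, since INP replaced FID as a Core Web Vital in March 2024. The metric keys and sample values are hypothetical.

```python
# (good, poor) boundaries: at or below "good" is good, above "poor" is poor.
THRESHOLDS = {
    "lcp_s": (2.5, 4.0),   # Largest Contentful Paint, seconds
    "inp_ms": (200, 500),  # Interaction to Next Paint, milliseconds
    "cls": (0.1, 0.25),    # Cumulative Layout Shift, unitless score
}


def rate(metric, value):
    """Classify a measured value as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


# Hypothetical field measurements for one page.
measurements = {"lcp_s": 3.1, "inp_ms": 180, "cls": 0.3}
for metric, value in measurements.items():
    print(metric, value, rate(metric, value))
```

Fix anything rated poor first, then work on the metrics that need improvement.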

Core Web Vitals failures can hold back otherwise high-quality content when competing pages offer similar relevance and a better experience.

Implement mobile-first optimization

Google primarily uses the mobile version of your pages for indexing and ranking, making mobile readiness critical for all rankings, not just mobile search. Use responsive design that adapts layout to screen sizes instead of serving separate mobile URLs. Test your pages with Lighthouse in Chrome DevTools (Google retired its standalone Mobile-Friendly Test in December 2023) to catch viewport configuration errors, text sizing problems, and tap target spacing issues.

Mobile users expect faster load times than desktop visitors. Reduce mobile page weight by serving appropriately sized images based on device resolution. Eliminate pop-ups and interstitials that cover content on small screens, as these create terrible user experiences and violate Google's guidelines. Ensure buttons and links have adequate spacing for finger taps, with 48x48 pixel minimum touch targets.

Remove UX friction points

Navigation complexity frustrates users and increases bounce rates. Simplify your menu structure so visitors reach key pages within three taps on mobile devices. Intrusive ads that shift content or block navigation damage both user experience and search rankings. Broken links and missing images drive visitors away and interrupt crawl paths, leaving both users and search engines with a poorer picture of your site.

How to monitor, maintain, and prioritize fixes

Your technical SEO strategy doesn't end after implementing fixes. Technical issues resurface as your site grows, platform updates introduce new problems, and competitors optimize faster than you maintain your foundation. Without consistent monitoring and maintenance, improvements degrade over time. You need systematic processes for catching issues early, prioritizing fixes by business impact, and keeping your site technically sound as content volume increases. Reactive troubleshooting costs more time and money than proactive monitoring that prevents problems before they tank your rankings.

Track issues with regular audits

Schedule comprehensive technical audits quarterly to catch degradation before it impacts rankings. Use Google Search Console to monitor crawl errors, indexation drops, and Core Web Vitals failures. The Page indexing report (formerly Coverage) shows which pages Google excluded from indexing and why. Server log analysis reveals what crawlers actually access versus what you think they see, exposing wasted crawl budget on low-value URLs.
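Server log analysis can start small: count Googlebot requests per path and see where the crawl goes. The sketch below assumes access logs in the common "combined" format and uses hypothetical sample lines. It matches on the user-agent string only, which can be spoofed, so treat the counts as approximate.

```python
import re
from collections import Counter

# Matches the request, status, and final quoted field (user agent) of a combined log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_hits(lines):
    """Count requests per path (query string removed) where the agent names Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path").split("?")[0]] += 1
    return hits


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

# Hypothetical log lines.
LOG_LINES = [
    f'66.249.66.1 - - [10/Jan/2025:10:00:00 +0000] "GET /blog/post-1/ HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Jan/2025:10:00:05 +0000] "GET /shoes?sort=price HTTP/1.1" 200 830 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Jan/2025:10:00:09 +0000] "GET /shoes?sort=name HTTP/1.1" 200 830 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Jan/2025:10:00:12 +0000] "GET /old-page HTTP/1.1" 404 120 "-" "{GOOGLEBOT_UA}"',
    f'203.0.113.7 - - [10/Jan/2025:10:01:00 +0000] "GET /blog/post-1/ HTTP/1.1" 200 5120 "-" "{BROWSER_UA}"',
]

hits = googlebot_hits(LOG_LINES)
for path, count in hits.most_common():
    print(count, path)
```

Paths that attract many hits but carry little value, such as sorted listing pages, are the first candidates for cleanup.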

Automated monitoring tools alert you to sudden changes in site speed, broken links, or indexation counts. Set up weekly checks for critical metrics like total indexed pages, Core Web Vitals scores, and mobile usability errors. Track your XML sitemap submissions to confirm Google processes updates within expected timeframes.

Catching technical issues within days instead of months prevents ranking losses that take months to recover.

Prioritize fixes by impact and effort

Not all technical problems deserve immediate attention. Prioritize based on potential traffic impact versus implementation difficulty. Broken links on high-traffic pages with strong backlink profiles warrant faster fixes than errors buried on rarely-visited pages. Issues preventing indexation of entire sections outweigh minor Core Web Vitals optimizations on individual pages.

Create a simple matrix rating each issue by impact (high/medium/low) and effort (quick/moderate/complex). Tackle high-impact, quick-win fixes first to show measurable improvements fast. Save complex, low-impact optimizations for when you have excess development resources. Security issues and complete indexation blocks always take priority regardless of effort required.
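The matrix translates directly into a sort order: blockers first, then highest impact, then lowest effort. A small sketch with hypothetical issues and an assumed `blocker` flag for security problems and complete indexation blocks:

```python
IMPACT = {"high": 3, "medium": 2, "low": 1}
EFFORT = {"quick": 1, "moderate": 2, "complex": 3}


def prioritize(issues):
    """Sort issues: blockers first, then highest impact, then lowest effort."""
    return sorted(
        issues,
        key=lambda issue: (
            not issue.get("blocker", False),
            -IMPACT[issue["impact"]],
            EFFORT[issue["effort"]],
        ),
    )


# Hypothetical audit findings.
issues = [
    {"name": "Oversized hero image on one post", "impact": "low", "effort": "moderate"},
    {"name": "Broken links on top landing page", "impact": "high", "effort": "quick"},
    {"name": "Blog section blocked in robots.txt", "impact": "high", "effort": "complex", "blocker": True},
    {"name": "Redirect chains in old category URLs", "impact": "medium", "effort": "quick"},
]

ordered = [issue["name"] for issue in prioritize(issues)]
for position, name in enumerate(ordered, start=1):
    print(position, name)
```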

Schedule ongoing maintenance

Technical debt accumulates as developers add features, content grows, and platforms update. Reserve dedicated time monthly for technical maintenance instead of waiting for quarterly audits. Review new pages for proper canonicalization, check that redirects resolve correctly, and confirm new content appears in your sitemap. Platform updates often reset configurations, so verify robots.txt and meta robots settings after major CMS updates to prevent accidental noindex tags or crawl blocks.
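One of these monthly checks, confirming that new content appears in your sitemap, is simple to script. The sketch below parses a sitemap with Python's standard XML library and lists published URLs that are missing from it. The sitemap content and the URL list are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Return the set of <loc> values in a urlset sitemap."""
    root = ET.fromstring(sitemap_xml)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}


def missing_from_sitemap(published_urls, sitemap_xml):
    """Return published URLs that the sitemap does not list."""
    return sorted(set(published_urls) - sitemap_urls(sitemap_xml))


# Hypothetical sitemap and list of recently published URLs.
SITEMAP_XML = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/blog/post-1/</loc></url>
  <url><loc>https://example.com/blog/post-2/</loc></url>
</urlset>"""

published = [
    "https://example.com/blog/post-1/",
    "https://example.com/blog/post-2/",
    "https://example.com/blog/post-3/",
]

missing = missing_from_sitemap(published, SITEMAP_XML)
print("Published but not in sitemap:", missing)
```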

Next steps to put this into action

Start by auditing your current technical foundation using Google Search Console and a crawl tool like Screaming Frog. Identify which pillar needs attention first: discovery issues that prevent crawling, rendering problems that block indexation, duplicate URLs that waste crawl budget, or performance failures that hurt rankings. Fix the highest-impact problems before moving to optimization work that shows smaller gains.

Your technical SEO strategy works best when paired with consistent content production. Once you remove the barriers preventing indexation, every new article you publish has a clear path to ranking. At RankYak, we handle the content creation and publishing automatically, but we also ensure every article follows technical best practices from day one. Start your free trial to see how automated content generation works on a technically solid foundation. You'll publish optimized articles daily while monitoring maintains your site's crawlability and performance over time.
