How to Do Technical SEO: Step-by-Step Guide for Beginners

You've published great content, but your pages aren't ranking. The culprit might not be your writing; it's likely what's happening behind the scenes. Understanding how to do technical SEO is the difference between a website Google can easily find and one that gets buried. Technical SEO ensures search engines can crawl, index, and understand your site without friction.

The good news? You don't need to be a developer to get this right. Technical SEO is about following clear steps to fix structural issues that block your rankings. From site speed to XML sitemaps, these foundational elements determine whether your content ever gets a fair shot in search results.

This guide breaks down the essentials into actionable steps you can implement today, even if you're starting from scratch. At RankYak, we automate the content side of SEO so you can focus on what matters, but without solid technical foundations, even the best content struggles to perform. Let's make sure your site is built for search engines to reward.

What technical SEO includes and what you need first

Technical SEO is the backend optimization that makes your website accessible and understandable to search engines. Unlike content SEO (which focuses on keywords and quality writing), technical SEO handles the infrastructure layer: how search engines crawl your pages, whether they can index your content, how fast your site loads, and whether mobile users get a smooth experience. You're essentially building the foundation that allows your content to perform.

Core technical SEO components

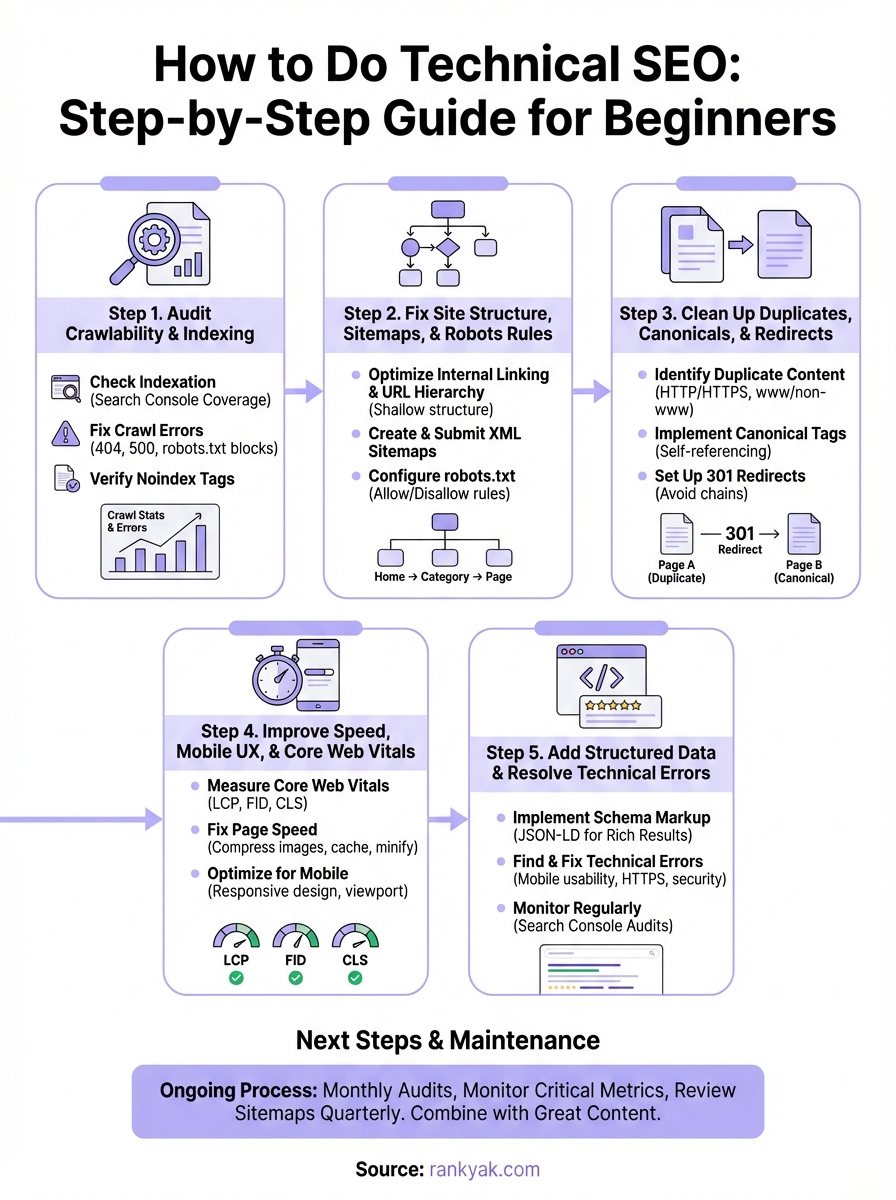

When you learn how to do technical SEO, you're tackling five major pillars that directly impact rankings. Each one addresses a specific way search engines interact with your site.

Crawlability and indexing determine whether Google's bots can find and store your pages. If crawlers get blocked by server errors or misconfigured files, your content never enters the index. Site structure organizes your pages logically through internal linking, URL hierarchy, and XML sitemaps that guide crawlers to your most important content. Duplicate content management ensures you're not competing against yourself with identical pages, using canonicals and redirects to consolidate ranking signals.

Performance optimization covers page speed, Core Web Vitals, and mobile responsiveness, all of which Google weighs heavily in its ranking algorithm. Finally, structured data markup helps search engines understand your content type, whether it's a product, recipe, or article, unlocking rich results in search.

Technical SEO doesn't replace great content. It ensures Google can actually find and rank what you've created.

Here's what each pillar addresses:

| Component | What It Fixes | Impact on Rankings |
|---|---|---|
| Crawlability | Blocked pages, server errors, crawl budget waste | Direct (pages can't rank if not crawled) |
| Site Structure | Orphaned pages, poor navigation, missing sitemaps | Indirect (improves crawl efficiency) |
| Duplicates | Competing URLs, thin content, redirect chains | Direct (consolidates ranking power) |
| Performance | Slow load times, poor mobile UX, failed Core Web Vitals | Direct (Google ranking factor) |
| Structured Data | Missing context, no rich snippets | Indirect (enhances SERP visibility) |

Tools and access you'll need

Before you start optimizing, you need diagnostic tools and site access to identify and fix issues. You can't improve what you can't measure.

Set up Google Search Console first. This free tool shows you indexing status, crawl errors, and mobile usability problems directly from Google's perspective. Pair it with Google PageSpeed Insights to measure Core Web Vitals and get specific recommendations for speed improvements. Both tools give you the baseline data you need to prioritize fixes.

You'll also need access to your website's backend through your CMS (WordPress, Shopify, Webflow) or FTP to edit files like robots.txt and upload sitemaps. Auditing tools like Lighthouse (built into Chrome DevTools) help you audit pages in real time without leaving your browser.

Finally, confirm you can modify your server configuration or work with someone who can. Some technical fixes require adjusting .htaccess files, updating server response codes, or enabling compression. If you're on a managed platform like WordPress.com or Shopify, many of these optimizations happen automatically, but you should still verify settings.

The good news? Most technical SEO work happens through accessible interfaces. You don't need to write code from scratch; you just need to understand what needs fixing and where to make those changes. The steps ahead walk you through exactly that process.

Step 1. Audit crawlability and indexing

Your first task in learning how to do technical SEO is diagnosing whether search engines can access your pages. This audit reveals blocked content, server errors, and indexing problems that prevent your site from appearing in search results. You're checking two things: can Google's crawlers reach your URLs, and are those pages making it into the index?

Check your indexation status in Search Console

Open Google Search Console and navigate to the Page indexing report (listed as "Pages" in the current interface and called "Coverage" in older versions). This dashboard shows you which URLs Google has indexed and which it hasn't, along with the reason for each. Current versions group URLs as "Indexed" or "Not indexed"; older versions used "Valid," "Excluded," "Error," and "Valid with warnings." Your goal is to identify pages that should be indexed but aren't.

Look at the "Not indexed" reasons first (the old "Excluded" and "Error" sections). Common issues include "Crawled - currently not indexed" (Google found the page but chose not to index it), "Discovered - currently not indexed" (Google knows about it but hasn't crawled it yet), and "Blocked by robots.txt" (you're actively preventing crawling). Each reason requires a different fix, so document which pages fall into each category.

Run a site: search in Google as a rough cross-check of indexed pages against your actual page count. Type site:yourdomain.com in the search bar. The result count is only an estimate, but if it is far lower than your total pages, you likely have an indexing gap that needs investigation. Treat Search Console as the authoritative source and use this check as a quick sanity test.

If Google can't crawl your pages, no amount of content optimization will help them rank.

Find and fix crawl errors

Check the "Crawl Stats" report in Search Console to see how often Google visits your site and whether it encounters problems. Look for spikes in failed requests (4xx or 5xx errors) or drops in crawl frequency. These patterns indicate server instability or configuration issues that block crawlers.

Here are the most common crawl blockers and their fixes:

| Error Type | What It Means | How to Fix |
|---|---|---|
| 404 (Not Found) | Page doesn't exist or URL is broken | Redirect to relevant page or restore content |
| 500 (Server Error) | Your server failed to respond | Contact hosting provider, check server logs |
| Blocked by robots.txt | Crawlers are explicitly denied | Edit robots.txt to allow access |
| Noindex tag | Page tells crawlers not to index it | Remove <meta name="robots" content="noindex"> |
| SSL/HTTPS issues | Certificate problems or mixed content | Install valid SSL, fix insecure resource URLs |
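To make the table easier to act on, here is a minimal sketch that tallies status codes from a crawl export and lists the URLs that need attention. The `crawl_log` data and both function names are hypothetical; a real export from your crawler or server logs would replace the sample list.

```python
from collections import Counter


def summarize_status_codes(entries):
    """Count crawled URLs per status class, e.g. '2xx', '4xx', '5xx'."""
    return Counter(f"{status // 100}xx" for _url, status in entries)


def failed_urls(entries):
    """Return URLs that answered with a client (4xx) or server (5xx) error."""
    return sorted(url for url, status in entries if status >= 400)


# Hypothetical sample data: (path, HTTP status) pairs from a crawl export.
crawl_log = [
    ("/", 200),
    ("/blog/", 200),
    ("/old-page/", 404),
    ("/api/report", 500),
    ("/moved/", 301),
]

summary = summarize_status_codes(crawl_log)
print(dict(summary))
print(failed_urls(crawl_log))
```

A sudden jump in the 4xx or 5xx counts between two exports is the kind of spike worth investigating first.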

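Test your robots.txt file by visiting yourdomain.com/robots.txt in a browser. Make sure you're not accidentally blocking important directories. Google retired the standalone robots.txt Tester, so use the robots.txt report in Search Console to confirm Google can fetch and parse the file, and the URL Inspection tool to verify specific URLs aren't being blocked unintentionally.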

Review pages with noindex tags by inspecting the HTML source code or using browser extensions like SEO Meta in 1 Click. Remove these tags from any page you want indexed, unless you're intentionally keeping it out of search results (like thank-you pages or internal admin pages).
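If you have many pages to review, a small script can flag noindex tags for you. The sketch below uses only Python's standard library and checks the robots meta tag in a page's HTML; the class and function names are illustrative, and it does not look at the X-Robots-Tag HTTP header, which can also carry a noindex directive.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            # Directives are comma-separated, e.g. "noindex, follow".
            self.directives += [d.strip().lower() for d in content.split(",") if d.strip()]


def has_noindex(html):
    """Return True if the HTML contains a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(page))
```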

Step 2. Fix site structure, sitemaps, and robots rules

Once you've confirmed crawlers can reach your site, the next step in how to do technical SEO is organizing your content so search engines understand your site's hierarchy and priorities. This means building a logical internal linking structure, creating XML sitemaps that map your pages, and configuring robots.txt to guide crawler behavior. Poor organization wastes crawl budget and leaves valuable pages buried.

Optimize your internal linking and URL hierarchy

Your site structure should follow a shallow hierarchy where every page is accessible within three clicks from the homepage. This architecture helps both users and crawlers discover content efficiently. Create category pages that organize related content, then link to individual articles or product pages from those hubs. Avoid orphaned pages (pages with no internal links pointing to them) by ensuring every URL is connected through your navigation or contextual links.
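The three-click rule and the orphan check can both be approximated with a breadth-first search over your internal links. The sketch below assumes you already have a link map (page to the pages it links to) from a crawl; `site_links`, `all_pages`, and the function names are hypothetical. It treats any page that can't be reached from the homepage as an orphan, which is slightly broader than "no internal links at all."

```python
from collections import deque


def click_depths(links, home="/"):
    """Breadth-first search: fewest clicks needed to reach each page from home."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_structure(links, all_pages, max_depth=3):
    """Return (orphans, too_deep): unreachable pages and pages beyond max_depth."""
    depths = click_depths(links)
    orphans = sorted(p for p in all_pages if p not in depths)
    too_deep = sorted(p for p, d in depths.items() if d > max_depth)
    return orphans, too_deep


# Hypothetical link map: each page maps to the pages it links to.
site_links = {
    "/": ["/blog/", "/products/"],
    "/blog/": ["/blog/post-a/"],
    "/blog/post-a/": ["/blog/post-b/"],
    "/blog/post-b/": ["/blog/post-c/"],
}
all_pages = {
    "/", "/blog/", "/products/", "/blog/post-a/",
    "/blog/post-b/", "/blog/post-c/", "/landing/old-promo/",
}

orphans, too_deep = audit_structure(site_links, all_pages)
print("Orphans:", orphans)
print("Deeper than 3 clicks:", too_deep)
```

In this sample, the old promo page has no path from the homepage and the last blog post sits four clicks deep, so both would benefit from a link on a category or hub page.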

Use descriptive URLs that reflect your content hierarchy. A structure like yourdomain.com/category/subcategory/page-name is clearer than yourdomain.com/p=12345. Keep URLs short, lowercase, and separated by hyphens. Update your main navigation to prioritize your most important pages, and add breadcrumb navigation to help users and search engines understand where they are in your site structure.

A well-organized site structure acts as a roadmap for crawlers, ensuring your best content gets found and indexed first.

Create and submit XML sitemaps

An XML sitemap lists all indexable URLs on your site, along with optional metadata like last modification date and priority. Google uses the last modification date when it is kept accurate but ignores the priority value. This file tells search engines which pages exist and when they last changed. Generate your sitemap using your CMS plugin (Yoast SEO for WordPress, built-in tools for Shopify) or online generators if you have a custom site.

Your basic sitemap XML should look like this:

    <?xml version="1.0" encoding="UTF-8"?>
    <urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <url>
        <loc>https://yourdomain.com/page-name/</loc>
        <lastmod>2026-03-01</lastmod>
        <priority>0.8</priority>
      </url>
    </urlset>

Upload your sitemap to your root directory (accessible at yourdomain.com/sitemap.xml), then submit it through Google Search Console under the Sitemaps section. Update your sitemap whenever you add or remove pages, and avoid including noindexed URLs, redirects, or error pages.
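If your site is custom-built and has no sitemap plugin, a short script can produce the file. This is a minimal sketch using Python's standard library; `build_sitemap` and the sample page list are hypothetical, and in practice the list would come from your database or a crawl, already filtered to indexable URLs.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified) pairs.

    Only <loc> and <lastmod> are written; <priority> is left out here
    because it is optional.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical page list; exclude noindexed, redirected, and error URLs.
pages = [
    ("https://yourdomain.com/", "2026-03-01"),
    ("https://yourdomain.com/page-name/", "2026-03-01"),
]

sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Writing `sitemap_xml` to a file named sitemap.xml in your root directory gives you something you can submit in Search Console.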

Configure robots.txt properly

Your robots.txt file controls which parts of your site crawlers can access. This text file lives at yourdomain.com/robots.txt and should allow crawling of your main content while blocking admin areas, duplicate pages, or resource-heavy sections. Here's a basic template:

    User-agent: *
    Disallow: /admin/
    Disallow: /cart/
    Disallow: /checkout/
    Allow: /

    Sitemap: https://yourdomain.com/sitemap.xml

Review changes carefully before going live, then confirm in Search Console's robots.txt report (which replaced the older robots.txt Tester) that Google fetched the new file without errors. Never block your entire site accidentally (like Disallow: /), and make sure you're not blocking JavaScript or CSS files that Google needs to render pages properly. Keep the file simple and only block what truly shouldn't be crawled.
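You can also sanity-check your rules locally before uploading them. The sketch below feeds the template rules to Python's built-in `urllib.robotparser` and reports which sample paths would be blocked; `blocked_paths` and the sample paths are illustrative. One caveat: Python's parser applies the first matching rule, while Google applies the most specific one, so results can differ for files with overlapping Allow and Disallow rules (they agree for this simple template).

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/
Disallow: /checkout/
Allow: /

Sitemap: https://yourdomain.com/sitemap.xml
"""


def blocked_paths(robots_txt, paths, user_agent="Googlebot"):
    """Return the paths that the given robots.txt rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(user_agent, p)]


# Hypothetical paths you expect to be crawlable or blocked.
sample_paths = ["/", "/blog/post/", "/admin/settings", "/cart/"]
print(blocked_paths(ROBOTS_TXT, sample_paths))
```

If a path you want indexed shows up in the output, fix the rule before the file goes live.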

Step 3. Clean up duplicates, canonicals, and redirects

Duplicate content confuses search engines about which version of a page to rank, splitting ranking signals across multiple URLs. When you're learning how to do technical SEO, cleaning up these duplicates becomes crucial because Google won't reward multiple identical pages. Instead, it picks one version (often not the one you want) and ignores the rest. This step consolidates your SEO value into the URLs you actually want to rank.

Identify duplicate content issues

Start by crawling your site with a site crawler or SEO audit tool, then spot-check suspicious URLs with Google Search Console's URL Inspection feature to find pages with identical or substantially similar content. Common culprits include HTTP vs HTTPS versions of the same page, www vs non-www URLs, trailing slash variations (/page/ vs /page), and parameter-based URLs like ?utm_source=email that serve the same content under separate URLs.
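Those URL variations are easy to spot programmatically. As a rough sketch, the code below reduces each URL to a grouping key (HTTPS, no www, no trailing slash, no utm_ parameters) and reports URLs that collapse to the same key. The key is only a heuristic for finding candidates, not a statement about which version you should prefer, and the function names and sample URLs are hypothetical.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def normalize(url):
    """Reduce a URL to a grouping key that ignores common duplicate variations."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/") or "/"
    # Drop tracking parameters such as utm_source; keep everything else.
    query = [(k, v) for k, v in parse_qsl(parts.query) if not k.lower().startswith("utm_")]
    return urlunsplit(("https", host, path, urlencode(query), ""))


def duplicate_groups(urls):
    """Group URLs by key and keep only groups with more than one member."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}


crawled = [
    "http://yourdomain.com/page",
    "https://www.yourdomain.com/page/",
    "https://yourdomain.com/page?utm_source=email",
    "https://yourdomain.com/other",
]
for key, members in duplicate_groups(crawled).items():
    print(key, "<-", members)
```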

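Check the Page indexing report in Search Console for reasons such as "Duplicate without user-selected canonical" and "Duplicate, Google chose different canonical than user," which flag URLs Google considers duplicates. Duplicate title tags and meta descriptions in your crawl data also signal potential duplicate content. Look for patterns in your URL structure where multiple paths lead to the same content, like category pages accessible through different navigation routes. Product pages with filters or sorting options often create duplicates unintentionally.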

Consolidating duplicate pages with canonical tags preserves your ranking power instead of diluting it across multiple URLs.

Implement canonical tags correctly

The canonical tag tells search engines which version of a page is the primary one you want indexed. Add this tag to the <head> section of duplicate or similar pages, pointing to your preferred URL. Here's the proper format:

<link rel="canonical" href="https://yourdomain.com/preferred-page/" />

Every page you want indexed should have a self-referencing canonical (pointing to itself) to prevent confusion, while duplicates point to the preferred version. Make sure your canonical URLs are absolute URLs (including https:// and the domain), not relative paths. Avoid canonical chains where Page A points to Page B, which points to Page C. Always point directly to the final destination.
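These canonical rules can be checked automatically. The following sketch parses a page's HTML with Python's standard library and reports findings such as a missing tag, a relative URL, or a canonical that points to a different page. `check_canonical` is a hypothetical helper, and a canonical pointing elsewhere is only a problem when the page is meant to rank on its own.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit


class CanonicalParser(HTMLParser):
    """Collects href values from <link rel="canonical"> tags."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "link" and "canonical" in (attr.get("rel") or "").lower().split():
            self.canonicals.append(attr.get("href") or "")


def check_canonical(page_url, html):
    """Return a list of findings; an empty list means a clean self-reference."""
    parser = CanonicalParser()
    parser.feed(html)
    if len(parser.canonicals) != 1:
        return [f"expected 1 canonical tag, found {len(parser.canonicals)}"]
    href = parser.canonicals[0]
    parts = urlsplit(href)
    if not (parts.scheme and parts.netloc):
        return ["canonical is not an absolute URL"]
    if href != page_url:
        return [f"canonical points to a different URL: {href}"]
    return []


html = '<head><link rel="canonical" href="https://yourdomain.com/preferred-page/" /></head>'
print(check_canonical("https://yourdomain.com/preferred-page/", html))
```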

Set up proper redirects

Use 301 redirects (permanent) to send visitors and search engines from old or duplicate URLs to the correct version. These redirects pass nearly all ranking power to the target page. Reserve 302 redirects (temporary) for short-term changes like A/B tests or seasonal campaigns.

Here's a redirect comparison:

| Redirect Type | When to Use | SEO Impact |
|---|---|---|
| 301 (Permanent) | Old URLs, site migrations, duplicate cleanup | Passes link equity; strong signal that the target is the canonical URL |
| 302 (Temporary) | A/B tests, temporary moves | Weaker canonical signal; the original URL usually stays indexed |
| Meta refresh | Avoid if possible (slow, poor UX) | Minimal SEO value |

Fix redirect chains where one redirect leads to another. Each extra hop slows page loads and wastes crawl budget, and Google's guidance is to keep chains as short as possible, ideally no more than three hops. Update internal links to point directly to final destinations instead of relying on redirects.
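Given a list of your redirect rules, flattening chains is mechanical. The sketch below assumes a simple source-to-target mapping (the `redirects` dictionary is hypothetical sample data) and rewrites every rule to point straight at its final destination, raising an error if it finds a loop.

```python
def resolve_redirect(url, redirects):
    """Follow redirects from url and return the full chain of URLs visited."""
    chain = [url]
    while chain[-1] in redirects:
        target = redirects[chain[-1]]
        if target in chain:
            raise ValueError("redirect loop: " + " -> ".join(chain + [target]))
        chain.append(target)
    return chain


def flatten_redirects(redirects):
    """Point every source directly at its final destination."""
    return {source: resolve_redirect(source, redirects)[-1] for source in redirects}


# Hypothetical redirect rules: source path -> target path.
redirects = {"/a": "/b", "/b": "/c", "/old": "/new"}

print(resolve_redirect("/a", redirects))
print(flatten_redirects(redirects))
```

After flattening, /a goes directly to /c in one hop instead of passing through /b.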

Step 4. Improve speed, mobile UX, and Core Web Vitals

Page speed directly impacts rankings and user experience. Google uses Core Web Vitals (LCP, INP, CLS) as ranking signals, measuring how quickly your content loads, how quickly the page responds to interaction, and whether elements shift unexpectedly during loading. When you master how to do technical SEO, speed optimization becomes one of your highest-impact activities because it affects both search visibility and conversion rates.

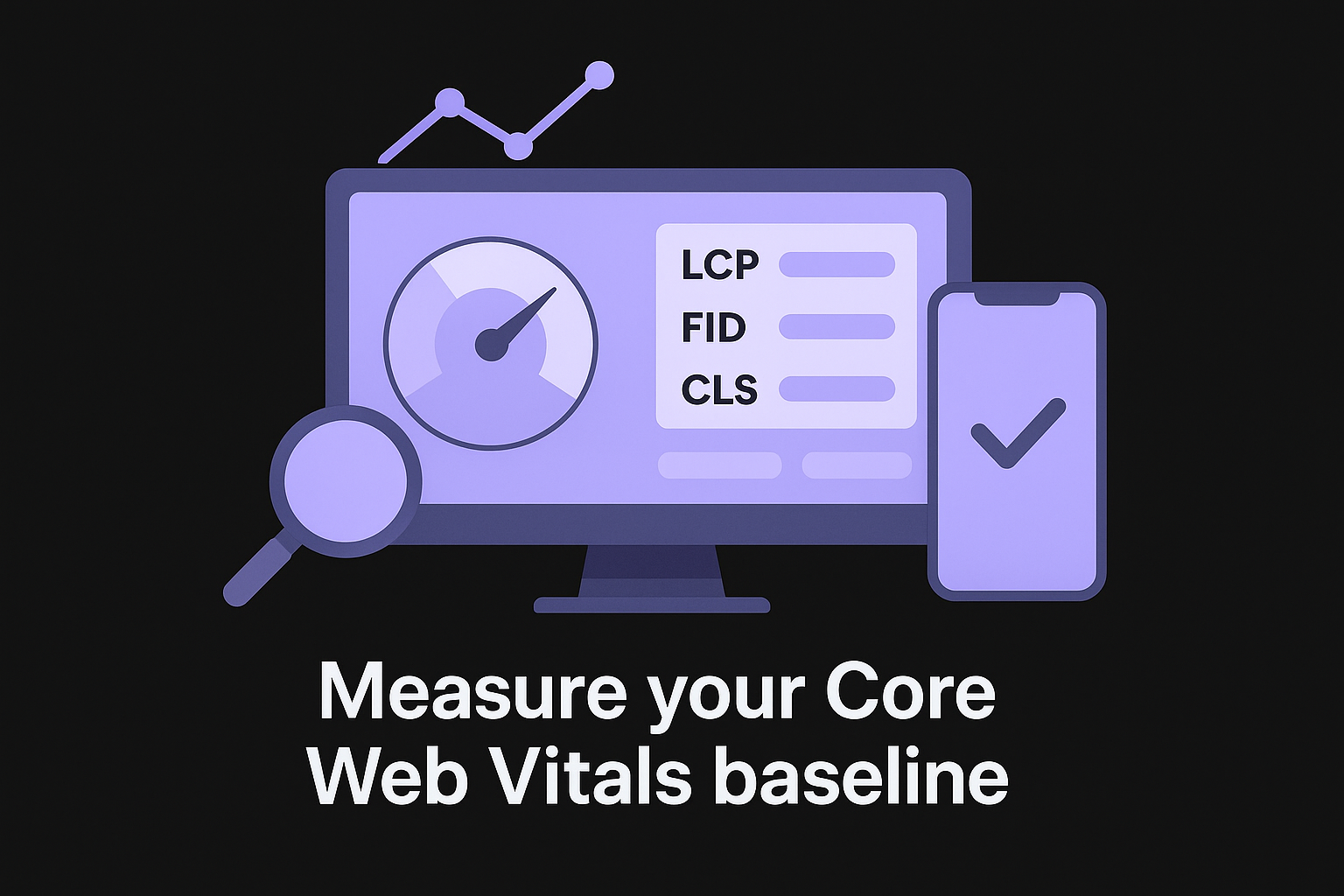

Measure your Core Web Vitals baseline

Run your site through Google PageSpeed Insights to get your performance scores. This tool measures three Core Web Vitals: Largest Contentful Paint (LCP, should be 2.5 seconds or less), Interaction to Next Paint (INP, 200 milliseconds or less; it replaced First Input Delay, FID, in March 2024), and Cumulative Layout Shift (CLS, 0.1 or less). Your scores appear for both mobile and desktop, with specific recommendations for each metric.

Check your real-world data in Search Console under the Core Web Vitals report. This shows actual user experience from Chrome users who visit your site, categorized as Good, Needs Improvement, or Poor. Focus on URLs in the Poor category first, as these hurt your rankings most severely.
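To show how the Good, Needs Improvement, and Poor buckets work, here is a small sketch that rates measurements against Google's published thresholds. It uses INP, the responsiveness metric that replaced FID as a Core Web Vital in March 2024. The function names and sample values are illustrative only.

```python
# (good_limit, poor_limit) per metric: at or below the first value is Good,
# above the second is Poor, anything in between Needs Improvement.
THRESHOLDS = {
    "LCP": (2.5, 4.0),    # seconds
    "INP": (200, 500),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}


def rate(metric, value):
    """Classify a single Core Web Vitals measurement."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "Good"
    if value <= poor:
        return "Needs Improvement"
    return "Poor"


def rate_page(measurements):
    """Classify every metric measured for a page."""
    return {metric: rate(metric, value) for metric, value in measurements.items()}


# Hypothetical measurements for one URL.
print(rate_page({"LCP": 2.1, "INP": 350, "CLS": 0.3}))
```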

Fixing Core Web Vitals improves both your search rankings and conversion rates, making speed optimization a double win for your business.

Fix page speed issues

Compress your images using modern formats like WebP, which typically reduces file sizes by 25-35% compared to JPEG at comparable visual quality. Implement lazy loading so images below the fold only load when users scroll down, but let above-the-fold images load normally so your LCP doesn't suffer. Add width and height attributes to image tags to prevent layout shifts.
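A quick way to find images that need attention is to scan your HTML for missing attributes. This sketch, built on Python's standard library with a hypothetical `audit_images` helper, flags img tags that lack width and height or that aren't lazy-loaded. Treat the lazy-loading finding as a prompt to review rather than an error, since images at the top of the page are usually better left to load immediately.

```python
from html.parser import HTMLParser


class ImageAudit(HTMLParser):
    """Records <img> tags missing dimensions or lazy loading."""

    def __init__(self):
        super().__init__()
        self.issues = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        src = attr.get("src") or "(no src)"
        if "width" not in attr or "height" not in attr:
            self.issues.append((src, "missing width/height"))
        if (attr.get("loading") or "").lower() != "lazy":
            self.issues.append((src, "not lazy-loaded"))


def audit_images(html):
    """Return a list of (image src, issue) pairs found in the HTML."""
    parser = ImageAudit()
    parser.feed(html)
    return parser.issues


sample = (
    '<img src="/hero.jpg" width="1200" height="600">'
    '<img src="/chart.png" loading="lazy">'
    '<img src="/team.jpg" width="400" height="300" loading="lazy">'
)
for src, issue in audit_images(sample):
    print(src, "->", issue)
```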

Enable browser caching by setting proper cache headers on your server. Static resources like CSS, JavaScript, and images should cache for at least 30 days. Minify your CSS and JavaScript files to remove unnecessary spaces, comments, and code. Most hosting providers and CDNs offer automatic minification.
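To verify the 30-day guideline, you can read the Cache-Control header your server sends for a static file (visible in the Network tab of your browser's developer tools) and check its max-age value. The sketch below does that check on the header text; the function names are hypothetical, and it deliberately ignores other caching headers such as Expires.

```python
import re

THIRTY_DAYS = 30 * 24 * 60 * 60  # 2,592,000 seconds


def max_age_seconds(cache_control):
    """Extract max-age (in seconds) from a Cache-Control header, or None."""
    match = re.search(r"\bmax-age\s*=\s*(\d+)", cache_control or "", re.IGNORECASE)
    return int(match.group(1)) if match else None


def caches_long_enough(cache_control, minimum=THIRTY_DAYS):
    """Return True if the header allows caching for at least `minimum` seconds."""
    age = max_age_seconds(cache_control)
    return age is not None and age >= minimum


# Sample header values as they might appear for different resources.
for header in ["public, max-age=31536000, immutable", "max-age=3600", "no-store"]:
    print(header, "->", caches_long_enough(header))
```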

Use a Content Delivery Network (CDN) to serve files from servers geographically closer to your visitors. CDNs reduce latency and improve load times globally, especially for international audiences. Popular options include Cloudflare, which offers a free tier with basic optimization features.

Optimize for mobile users

Test your pages on mobile with Lighthouse in Chrome DevTools, using device emulation to spot mobile usability issues (Google retired its standalone Mobile-Friendly Test tool in December 2023). Common problems include text too small to read, clickable elements too close together, and content wider than the screen. Your viewport meta tag should look like this:

<meta name="viewport" content="width=device-width, initial-scale=1">

Implement responsive design so your layout adapts to different screen sizes automatically. Use flexible grids, fluid images, and CSS media queries to adjust content based on device width. Ensure touch targets (buttons, links) are at least 48x48 pixels to prevent mis-taps. Avoid pop-ups that cover content on mobile, as Google penalizes intrusive interstitials that block access to your main content.

Step 5. Add structured data and resolve technical errors

Structured data helps search engines understand your content type and display it as rich results in search, while unresolved technical errors silently tank your rankings. This final step in learning how to do technical SEO addresses both issues. You're adding semantic markup that enhances your SERP appearance and eliminating broken elements that block crawlers or frustrate users. These fixes often deliver quick wins because many sites overlook them entirely.

Implement schema markup for rich results

Schema markup uses JSON-LD format to tell search engines what your content represents, whether that's an article, product, recipe, or business listing. Add this code to your page's <head> section or footer. Google recommends JSON-LD over microdata because it's cleaner and easier to maintain. Here's a basic article schema template:

    <script type="application/ld+json">
    {
      "@context": "https://schema.org",
      "@type": "Article",
      "headline": "Your Article Title",
      "author": {
        "@type": "Person",
        "name": "Author Name"
      },
      "datePublished": "2026-03-02",
      "image": "https://yourdomain.com/image.jpg",
      "publisher": {
        "@type": "Organization",
        "name": "Your Site Name",
        "logo": {
          "@type": "ImageObject",
          "url": "https://yourdomain.com/logo.jpg"
        }
      }
    }
    </script>

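Test your markup using Google's Rich Results Test to verify it's valid and eligible for enhanced display. Focus on schema types relevant to your content: Organization, Product, FAQ, HowTo, Review, or BreadcrumbList. Each type can unlock different SERP features like star ratings, price displays, or breadcrumb trails. Note that Google has cut back some of these: since 2023, FAQ rich results appear mainly for well-known government and health sites, and HowTo rich results are no longer shown.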
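Hand-editing JSON-LD invites syntax errors such as a missing comma or quote. One option, sketched below, is to generate the snippet from a small function so the output is always valid JSON. `article_jsonld` and its parameters are hypothetical, and the publisher block is omitted for brevity; the result should still go through the Rich Results Test.

```python
import json


def article_jsonld(headline, author, date_published, image_url):
    """Return a <script> tag containing Article structured data as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "image": image_url,
    }
    # Escape "</" so a value can never close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


snippet = article_jsonld(
    "Your Article Title",
    "Author Name",
    "2026-03-02",
    "https://yourdomain.com/image.jpg",
)
print(snippet)
```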

Structured data doesn't directly improve rankings, but it increases click-through rates by making your results more visually prominent in search.

Find and fix technical errors

Run regular audits using Google Search Console to catch errors before they damage rankings. Google retired the Mobile Usability report in December 2023, so check touch element sizing, viewport problems, and content width errors with Lighthouse instead. Review the Security Issues section for malware warnings or hacked content notifications that trigger safe browsing alerts.

Monitor your HTTPS implementation by verifying your SSL certificate is valid and doesn't expire soon. Fix mixed content warnings where some page elements load over HTTP instead of HTTPS by updating resource URLs. Address 404 errors reported in Search Console by redirecting dead links or restoring deleted pages. Track server errors (5xx codes) that indicate hosting instability, working with your provider to resolve downtime or timeout issues. Your site should maintain 99%+ uptime for consistent crawling and indexing.
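Mixed content is simple to detect in a page's HTML: look for resources that still load over http://. The sketch below checks the most common resource tags using Python's standard library. The tag list and the `find_mixed_content` helper are assumptions for illustration, and ordinary links (a tags) are skipped because linking to an HTTP page doesn't count as mixed content.

```python
from html.parser import HTMLParser

# Tags that load resources, mapped to the attribute holding the URL.
RESOURCE_ATTRS = {
    "img": "src", "script": "src", "iframe": "src",
    "video": "src", "audio": "src", "source": "src", "link": "href",
}


class MixedContentFinder(HTMLParser):
    """Records resources that are requested over plain HTTP."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attr_name = RESOURCE_ATTRS.get(tag)
        if not attr_name:
            return
        attr = dict(attrs)
        # For <link>, only stylesheets are treated as loaded resources here.
        if tag == "link" and "stylesheet" not in (attr.get("rel") or "").lower().split():
            return
        value = attr.get(attr_name) or ""
        if value.lower().startswith("http://"):
            self.insecure.append((tag, value))


def find_mixed_content(html):
    """Return (tag, url) pairs for resources loaded over HTTP."""
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


sample_page = (
    '<img src="http://yourdomain.com/a.jpg">'
    '<script src="https://yourdomain.com/app.js"></script>'
    '<a href="http://example.com/">external link</a>'
    '<link rel="stylesheet" href="http://yourdomain.com/s.css">'
)
print(find_mixed_content(sample_page))
```

Each URL in the output should be updated to https:// (or the resource replaced if it isn't available over HTTPS).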

Next steps to keep your site healthy

Technical SEO isn't a one-time project; it's ongoing maintenance that protects your rankings. Schedule monthly audits using Google Search Console to catch crawl errors, indexing drops, or Core Web Vitals regressions before they hurt traffic. Set up automated monitoring for critical metrics like uptime, page speed, and mobile usability scores. Review your sitemaps quarterly to ensure new pages get indexed quickly.

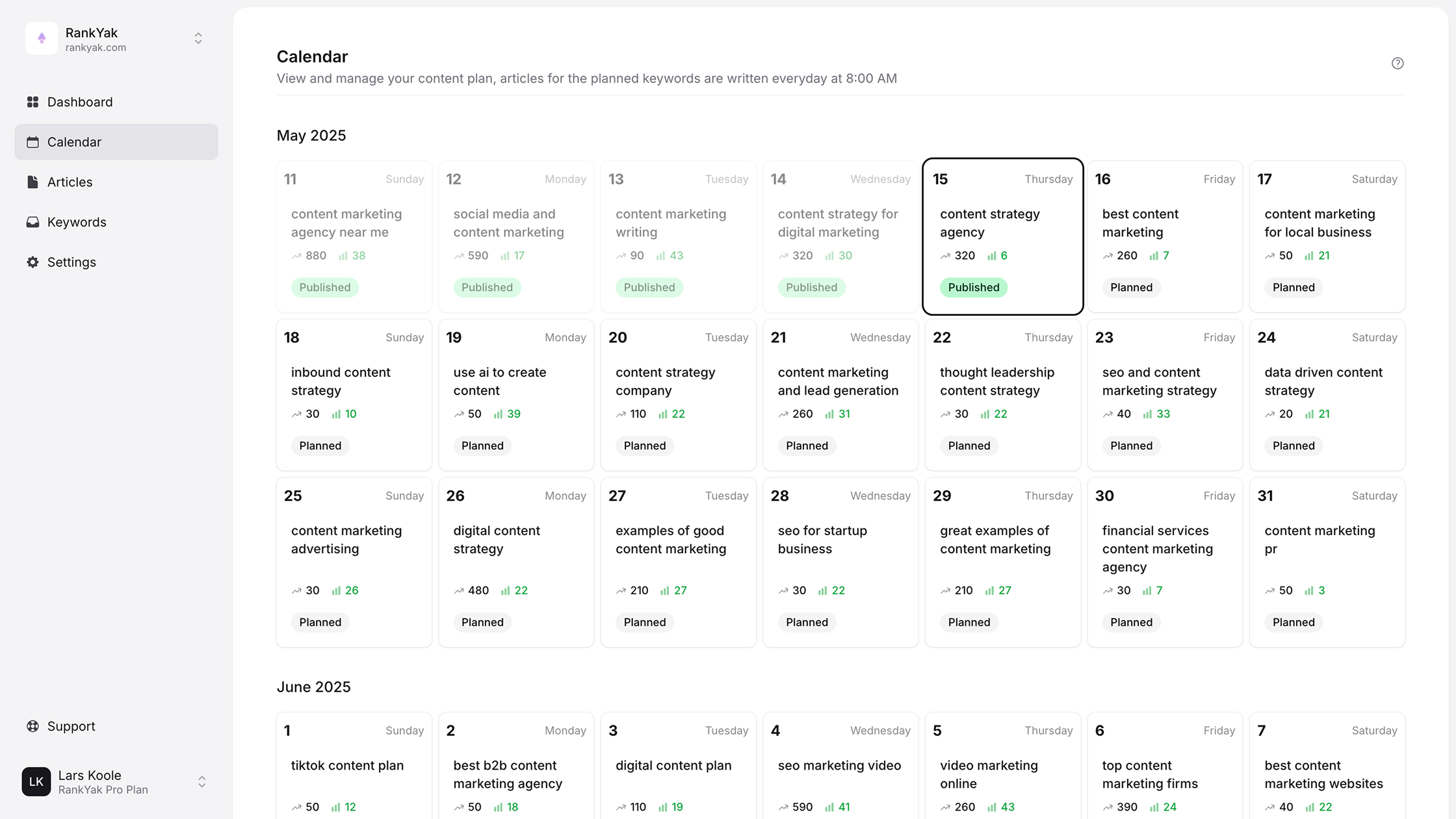

Now that you know how to do technical SEO, the real challenge is keeping up with content creation while maintaining these optimizations. That's where automation becomes essential. RankYak handles the entire content lifecycle, from keyword research to publishing SEO-optimized articles daily, freeing you to focus on technical foundations. Your site gets consistent, high-quality content that's already optimized for search engines and AI platforms.

Start your free 3-day trial at RankYak to see how automated SEO works alongside your technical optimizations.
